I thought I’d try a full recovery as if I had lost the disk completely. It’s currently about 400GB in all.

My first attempt failed: it took so long that the next scheduled backup kicked in, and after 8 hours(!) it complained that there were unexpected files. OK, so that wouldn’t arise in the real situation, so I suspended backups on that destination and started again.

Starting about noon on Thursday 4th, it then took around 8 hours to rebuild the database. I’m puzzled why you say “you don’t need database recreation to restore files should you suffer a hard drive crash”, as that is what recover does (or says it is doing, at least). There may be other methods, but if it doesn’t need it why does the primary restore do it? It actually said “Building partial temporary database… recreating database”, so maybe it didn’t do it completely, but it still took 8 hours.

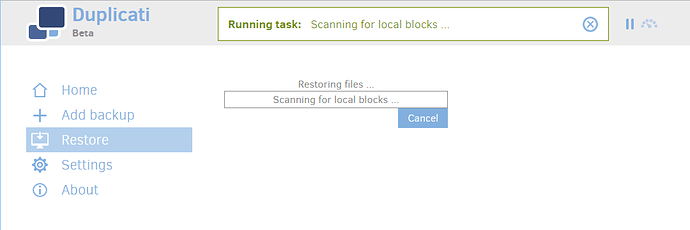

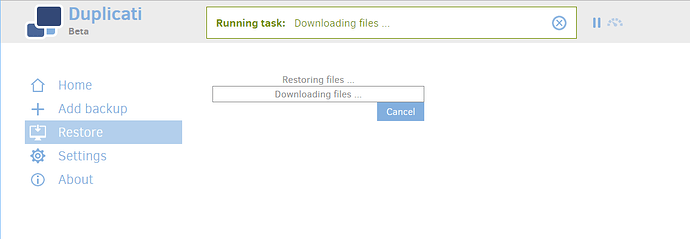

Then it said “building list of files to restore” with a progress bar, then after 20 minutes “creating folders”, and 5 minutes later “Scanning for local folders” with a progress bar (image 1). This bar never advanced for the remainder of the recovery, although the text did change at some point to say “downloading files” (image 2). The bar still never advanced, so I had no idea how far it had got.

On Saturday I checked the target disk, and it appeared to be complete, in that the size looked about right and every file I sampled was present and correct. However, the restore didn’t say it was complete, so I left it, and it remained as in image 2 all through Saturday and Sunday. By Monday morning it had changed to say complete (the donations message); according to the messages it actually completed on Monday at 03:05.

So, in all it took 87 hours to complete the restore, with no useful progress information, and in particular I don’t know what it was doing for the last 40 hours or so after it looked like it was all restored (was it maybe verifying?).

This was from a network disk on a gigabit network where both ends were constrained by USB2, which in a pure file copy would be the limiting factor. 400GB over 87 hours means about 1.3MB/sec.
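For anyone who wants to check that figure, here is a quick sketch of the arithmetic in Python. The function name is just something I made up for illustration, and it assumes decimal units (1GB = 1000MB) and that the full 87 hours counts as transfer time, which overstates it given the database rebuild at the start.

```python
# Average restore throughput in MB/sec.
# Assumes decimal units: 1 GB = 1000 MB.
def throughput_mb_per_sec(size_gb: float, hours: float) -> float:
    size_mb = size_gb * 1000
    seconds = hours * 3600
    return size_mb / seconds

# 400 GB restored over 87 hours (Thursday noon to Monday 03:05)
rate = throughput_mb_per_sec(400, 87)
print(f"{rate:.2f} MB/sec")
```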

I then tried a Windows directory copy on a pretty typical 110MB folder containing 8,000 files (lots of small files take a lot longer to copy than big ones). This took 371 seconds or 0.296 MBytes/sec. By that measure 400GB (at 1GB = 1000MB) would have taken about 375 hours!
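The extrapolation can be reproduced with a few lines of Python. This is only a rough sketch: the function name is hypothetical, and it assumes the whole 400GB would copy at the same rate as my small-file sample, which is probably pessimistic for the larger files.

```python
# Extrapolate a full-disk copy time from a timed sample copy.
# Assumes decimal units (1 GB = 1000 MB) and that the sample's
# rate is representative of the whole disk.
def estimated_copy_hours(total_gb: float, sample_mb: float, sample_seconds: float) -> float:
    rate_mb_per_sec = sample_mb / sample_seconds
    total_seconds = (total_gb * 1000) / rate_mb_per_sec
    return total_seconds / 3600

# Sample: 110 MB (8,000 files) copied in 371 seconds
hours = estimated_copy_hours(400, 110, 371)
print(f"about {hours:.0f} hours")
```

Working from the unrounded sample rate gives a figure a fraction of an hour below the one quoted above, which used the rate rounded to 0.296 MBytes/sec.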

So on the face of it, while 87 hours sounds like a very long time, it looks like the restore took less time than a simple file copy would have. As I say, it seemed to be substantially complete after some 48 hours, so either way it’s not orders of magnitude different. If the database recreation didn’t take so long (couldn’t the database be uploaded with the backups in the first place?), it would be pretty good…

So my conclusions:

- most importantly, it completed successfully!

- it took a long time, but copying files also takes a long time, so the restore time isn’t massive by comparison

- it showed no progress in the progress bar, so I couldn’t tell how far it had got. This was the most difficult thing.

- for the last half-ish of the time, it didn’t appear to be doing anything!
