In this post I'll discuss the effect of Custom and Smart Retention settings on deleted files.

In short: deleted files may be gone long before you expect them to be.

Please note the following:

- Duplicati works as designed

- Judging from a long read of forum posts, many users will not expect this (but some already know)

- I could not find this in the manual pages

- Maybe I do not correctly understand the Duplicati backup model. It would be nice if an expert could comment to confirm or correct the facts stated in this post

In order to understand the behavior, first we must know:

How does Duplicati make backups?

- Your source is one or more directory trees, for which you want to maintain backups

- With each full and uninterrupted backup, Duplicati creates a snapshot of your complete source. This snapshot is also called backup, backup-set or version

- The snapshot essentially is a complete listing of your source, including (a reference to) its contents

- The content of the files (split into blocks) is maintained separately by Duplicati in volume files (dblocks)

- The snapshot refers to this content (by block hashes), and unchanged content is only saved once

Please note that a snapshot essentially is a point-in-time listing/backup of your source. A snapshot only has knowledge about objects that exist in the source at that moment.

Ok, what does all this mean?

- For the first backup, Duplicati creates a snapshot (listing of all source objects) and saves all contents

- For an unchanged source, each Duplicati backup creates a snapshot (listing), but does not save any content, because this is not needed as it is unchanged

- For a source with changed content, only the changes are saved. The old content is kept as long as other, older snapshots refer to it

- For a source with deleted files, nothing special is done. The deleted files are simply absent from the new snapshot. The content of deleted files is kept as long as other, older snapshots refer to it
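To make the points above concrete, here is a toy model in Python. It is only a sketch of the idea, not Duplicati's real storage format: the class `ToyBackup` and its methods are names I made up, and it stores whole files by hash instead of splitting them into blocks and packing them into volumes.

```python
import hashlib


class ToyBackup:
    """Hypothetical, simplified model: snapshots are listings that point at content."""

    def __init__(self):
        self.blocks = {}     # content hash -> content, each stored only once
        self.snapshots = []  # one {path: content hash} listing per backup run

    def backup(self, source):
        """Take a snapshot of `source`, a {path: bytes} mapping."""
        listing = {}
        for path, content in source.items():
            digest = hashlib.sha256(content).hexdigest()
            self.blocks.setdefault(digest, content)  # unchanged content is not saved again
            listing[path] = digest
        self.snapshots.append(listing)

    def delete_snapshot(self, index):
        """Remove one snapshot and drop content that no snapshot refers to anymore."""
        del self.snapshots[index]
        referenced = {h for snap in self.snapshots for h in snap.values()}
        self.blocks = {h: c for h, c in self.blocks.items() if h in referenced}

    def restorable(self, path):
        """A file can be restored only if some remaining snapshot lists it."""
        return any(path in snap for snap in self.snapshots)


store = ToyBackup()
store.backup({"a.txt": b"hello", "b.txt": b"world"})
store.backup({"a.txt": b"hello"})       # b.txt was deleted from the source
print(store.restorable("b.txt"))        # still listed in the older snapshot
store.delete_snapshot(0)                # the older snapshot is removed
print(store.restorable("b.txt"))        # no snapshot knows about b.txt anymore
```

The second backup stores nothing new, and the deleted file stays restorable only until the one snapshot that lists it is removed.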

Suppose you make a backup every day for an entire year. You will have 365 snapshots (or backup-sets, or versions). That can be a lot of data to keep.

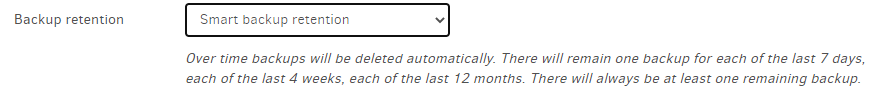

Suppose you are happy with backup thinning and you only need one backup a day for the first 7 days, one per week for the next 4 weeks and one per month for a year after that.

This can be done with a Custom Retention setting: 7D:1D,4W:1W,12M:1M (a Smart Retention is just a preset Retention Setting of this kind).

The effect is that the number of snapshots to keep will be reduced to 7 + 4 + 12 = 23, and the oldest one will be about 12 months plus 4 weeks plus 7 days old.
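The arithmetic behind that count can be sketched in a few lines of Python. This is only my reading of the rule syntax as `timeframe:interval` pairs. The helper names and the unit lengths (a month as 30 days, a year as 365 days) are assumptions for illustration, and the sketch handles only the units D, W, M and Y.

```python
# Assumed unit lengths in days; only D, W, M and Y are handled in this sketch.
UNIT_DAYS = {"D": 1, "W": 7, "M": 30, "Y": 365}


def to_days(token):
    """Convert a token such as '4W' into a number of days."""
    return int(token[:-1]) * UNIT_DAYS[token[-1].upper()]


def parse_retention(rule):
    """Split 'timeframe:interval' pairs into (timeframe_days, interval_days)."""
    pairs = []
    for part in rule.split(","):
        timeframe, interval = part.strip().split(":")
        pairs.append((to_days(timeframe), to_days(interval)))
    return pairs


def snapshots_per_rule(rule):
    """Rough count per pair: how many intervals fit into each timeframe."""
    return [timeframe // interval for timeframe, interval in parse_retention(rule)]


counts = snapshots_per_rule("7D:1D,4W:1W,12M:1M")
print(counts, sum(counts))  # [7, 4, 12] 23
```

Treat the total as a rough estimate: the exact number of snapshots kept depends on how Duplicati measures the timeframes and picks snapshots within them.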

For source objects that stay present in your source, all works as expected. Nothing to tell here. For source objects that are deleted, this does not work the way I expected (and, I fear, the way many others expect). Why is that?

Somehow, I expected that deleted files follow the Custom Retention rule as set up and that a deleted file will be retrievable for the total retention time, a bit over a year in the example. But this is not how it works. Depending on how long a file existed before it was deleted, it can be retrievable as expected, or be gone after about a week!

Worst case:

- One backup each day and a Custom Retention rule of 7D:1D,4W:1W,12M:1M

- Create a file and run a daily backup. The file now exists in one snapshot/backup-set/version (version 0f; the f marks a version that contains the file)

- Next day, delete the file and run the backup. All backups from this day on do not contain the deleted file. We now have two snapshots (0,1f)

- After 6 more days, we have 8 snapshots and backup thinning kicks in. But there is only one snapshot in the week bin, so I think all 8 are still kept (0,1,2,3,4,5,6,7f)

- After another day, we have 9 snapshots: 7 for the first 7 days and 2 for the week after that (0,1,2,3,4,5,6,7,8f). I think the very oldest snapshot is now deleted.

Conclusion: after nine days your deleted file is lost forever.

Please note that I do not fully understand snapshot selection during thinning. It could be that in this example the oldest snapshot is kept. If so, add an extra day and backup before step 2.
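Both variants of the worst case can be tried out with a small simulation. Please treat it as a sketch under loud assumptions, not as Duplicati's actual algorithm: time is counted in whole days, each timeframe is measured back from today, a snapshot belongs to the shortest timeframe that covers it, and within a timeframe the oldest snapshot is kept and any later one is kept only if it lies at least one interval after the previously kept one. The functions `thin` and `day_file_is_lost` are my own hypothetical helpers.

```python
# Simplified thinning model (an assumption, not Duplicati's actual code).
# (timeframe_days, interval_days) pairs standing in for 7D:1D,4W:1W,12M:1M.
RULES = [(7, 1), (28, 7), (365, 30)]


def thin(snapshot_days, today, rules):
    """Return the snapshot days that survive thinning on `today`."""
    kept = {max(snapshot_days)}  # assume the newest snapshot is always kept
    remaining = sorted(snapshot_days)
    for timeframe, interval in sorted(rules):
        # Snapshots not claimed by a shorter timeframe and still inside this one.
        bucket = [d for d in remaining if today - d <= timeframe]
        remaining = [d for d in remaining if today - d > timeframe]
        last_kept = None
        for day in bucket:  # oldest first
            if last_kept is None or day - last_kept >= interval:
                kept.add(day)
                last_kept = day
    # Anything older than the longest timeframe is dropped.
    return sorted(kept)


def day_file_is_lost(file_days, rules, horizon=400):
    """Return the first day on which no surviving snapshot contains the file."""
    snapshots = []
    for today in range(horizon):  # one backup per day, then thinning
        snapshots.append(today)
        snapshots = thin(snapshots, today, rules)
        if today > max(file_days) and not set(file_days) & set(snapshots):
            return today
    return None


print(day_file_is_lost({1}, RULES))       # file only in the day-1 snapshot, a backup exists before it
print(day_file_is_lost({0}, RULES))       # file only in the very first snapshot
print(day_file_is_lost({1}, [(365, 1)]))  # stand-in for "delete backups older than" one year
```

Under these assumptions the three calls print 9, 366 and 367. So when an older backup already exists, the short-lived file is gone about eight days after its only snapshot was taken, while in the very first snapshot it survives only because that snapshot happens to be the oldest one in every bin.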

The issue here is that short-lived files can be lost before you know it. Short-lived means shorter than the largest thinning interval, in this example one month. The reason is that thinning only looks at snapshot dates, nothing else. Again: Duplicati works as designed.

For me, retrieval of short-lived files must be possible during my total backup retention period, say one year. Deleted files must be retrievable like all other files. For this use case, using a Smart or Custom Retention rule is not possible. The solution simply is to use another rule (Delete backups that are older than).

I wonder if this aspect was on the radar when custom retention rules were designed. I feel they are only usable by very advanced users.

So please be aware of the effect of Smart or Custom Retention rules if you care about deleted files!