No clues for anybody if you don’t interpret or post them. Any recollection? Any logs?

```
{
  "RestoredFiles": 606169,
  "SizeOfRestoredFiles": 415573583119,
  "RestoredFolders": 88816,
  "RestoredSymlinks": 0,
  "PatchedFiles": 0,
  "DeletedFiles": 0,
  "DeletedFolders": 0,
  "DeletedSymlinks": 0,
  "MainOperation": "Restore",
  "RecreateDatabaseResults": null,
  "ParsedResult": "Error",
  "Interrupted": false,
  "Version": "2.0.8.1 (2.0.8.1_beta_2024-05-07)",
  "EndTime": "2025-09-08T00:36:18.405138Z",
  "BeginTime": "2025-09-06T16:39:26.2294286Z",
  "Duration": "1.07:56:52.1757094",
  "MessagesActualLength": 15058,
  "WarningsActualLength": 18,
  "ErrorsActualLength": 179,
  "Messages": [
    ...
  ],
  "Warnings": [
    "2025-09-06 11:40:59 -05 - [Warning-Duplicati.Library.Main.Operation.FilelistProcessor-MissingRemoteHash]: remote file duplicati-b50688453e5d24dd7810fc828394df504.dblock.zip.aes is listed as Verified with size 1048576 but should be 52421181, please verify the sha256 hash \"mfq32T01sdofr6xC6KCFXfsnXeRjbrWY7snym4yg3z4=\"",
    ... (two more like this ^)
    "2025-09-07 17:05:11 -05 - [Warning-Duplicati.Library.Main.Operation.RestoreHandler-MetadataWriteFailed]: Failed to apply metadata to file: \"D:\\backup restore 2025-09-06\\billysFile\\Graphical\\DIGITAL CAMERA\\2024-10-23\\Picks\\PXL_20241026_010322411.MP.jpg\", message: Could not find file '\\\\?\\D:\\backup restore 2025-09-06\\billysFile\\Graphical\\DIGITAL CAMERA\\2024-10-23\\Picks\\PXL_20241026_010322411.MP.jpg'.\r\nFileNotFoundException: Could not find file '\\\\?\\D:\\backup restore 2025-09-06\\billysFile\\Graphical\\DIGITAL CAMERA\\2024-10-23\\Picks\\PXL_20241026_010322411.MP.jpg'."
    ... (A bunch more like this ^)
  ],
  "Errors": [
    "2025-09-06 12:47:41 -05 - [Error-Duplicati.Library.Main.AsyncDownloader-FailedToRetrieveFile]: Failed to retrieve file duplicati-b4f228ccfe68540c1a505bc9b73dbf3f9.dblock.zip.aes\r\nCryptographicException: Failed to decrypt data (invalid passphrase?): Message has been altered, do not trust content",
    "2025-09-06 12:47:41 -05 - [Error-Duplicati.Library.Main.Operation.RestoreHandler-PatchingFailed]: Failed to patch with remote file: \"duplicati-b4f228ccfe68540c1a505bc9b73dbf3f9.dblock.zip.aes\", message: Failed to decrypt data (invalid passphrase?): Message has been altered, do not trust content\r\nCryptographicException: Failed to decrypt data (invalid passphrase?): Message has been altered, do not trust content",
    ... (one more of these ^)
    "2025-09-06 13:14:07 -05 - [Error-Duplicati.Library.Main.AsyncDownloader-FailedToRetrieveFile]: Failed to retrieve file duplicati-b4f2737dba4454f25bc8e9e3ff709204f.dblock.zip.aes\r\nCryptographicException: Invalid header marker",
    ... (one more of these ^)
    "2025-09-06 18:35:15 -05 - [Error-Duplicati.Library.Main.AsyncDownloader-FailedToRetrieveFile]: Failed to retrieve file duplicati-b852168eebb434eb5b32f7d1c0b2ccaec.dblock.zip.aes\r\nCryptographicException: File length is invalid",
    ... (one more of these ^)
    "2025-09-07 18:37:14 -05 - [Error-Duplicati.Library.Main.Operation.RestoreHandler-RestoreFileFailed]: Failed to restore file: \"D:\\backup restore 2025-09-06\\ok\\Classic Rock\\Top\\chill\\Beach Boys - California Dreamin'.mp3\". Error message was: Failed to restore file: \"D:\\backup restore 2025-09-06\\ok\\Classic Rock\\Top\\chill\\Beach Boys - California Dreamin'.mp3\". File hash is F3xohu6RNn1rND6TUDSXN75jVRPY0sbROXyQuBhnAzc=, expected hash is 4Tkgf9UzdnyxI9rkpXnAaZFAFH15Za+4h98spiE1ZZs=\r\nException: Failed to restore file: \"D:\\backup restore 2025-09-06\\ok\\Classic Rock\\Top\\chill\\Beach Boys - California Dreamin'.mp3\". File hash is F3xohu6RNn1rND6TUDSXN75jVRPY0sbROXyQuBhnAzc=, expected hash is 4Tkgf9UzdnyxI9rkpXnAaZFAFH15Za+4h98spiE1ZZs=",
    ... (a number more similar to this ^)
  ],
  "BackendStatistics": {
    "RemoteCalls": 7527,
    "BytesUploaded": 0,
    "BytesDownloaded": 389195677785,
    "FilesUploaded": 0,
    "FilesDownloaded": 7501,
    "FilesDeleted": 0,
    "FoldersCreated": 0,
    "RetryAttempts": 20,
    "UnknownFileSize": 1570,
    "UnknownFileCount": 1,
    "KnownFileCount": 15763,
    "KnownFileSize": 421077134544,
    "LastBackupDate": "2025-08-31T16:00:00-05:00",
    "BackupListCount": 483,
    "TotalQuotaSpace": 4000634109952,
    "FreeQuotaSpace": 452027482112,
    "AssignedQuotaSpace": -1,
    "ReportedQuotaError": false,
    "ReportedQuotaWarning": false,
    "MainOperation": "Restore",
    "ParsedResult": "Success",
    "Interrupted": false,
    "Version": "2.0.8.1 (2.0.8.1_beta_2024-05-07)",
    "EndTime": "0001-01-01T00:00:00",
    "BeginTime": "2025-09-06T16:39:26.2294286Z",
    "Duration": "00:00:00",
    "MessagesActualLength": 0,
    "WarningsActualLength": 0,
    "ErrorsActualLength": 0,
    "Messages": null,
    "Warnings": null,
    "Errors": null
  }
}
```
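The MissingRemoteHash warning in this log asks for the remote volume's SHA-256 to be checked, and its two sizes (1048576 listed vs. 52421181 expected) suggest a truncated file. A minimal Python sketch of that check follows. It assumes the hash in the warning is a Base64-encoded SHA-256 digest of the whole remote file (the 44-character string ending in `=` is consistent with that) and that the destination drive is reachable as a local path. `verify_remote_volume` is a hypothetical helper, not part of Duplicati.

```python
import base64
import hashlib
from pathlib import Path


def verify_remote_volume(path: Path, expected_size: int, expected_sha256_b64: str) -> list:
    """Return a list of problems; an empty list means size and hash both match."""
    problems = []

    # A size mismatch alone already means the volume on disk is not what was uploaded.
    actual_size = path.stat().st_size
    if actual_size != expected_size:
        problems.append(f"size is {actual_size}, database expects {expected_size}")

    # Hash the file in 1 MiB chunks so large dblock volumes are not loaded into memory.
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(1 << 20), b""):
            digest.update(chunk)

    # Assumption: Duplicati prints the digest Base64-encoded, as in the warning text.
    actual_hash = base64.b64encode(digest.digest()).decode("ascii")
    if actual_hash != expected_sha256_b64:
        problems.append(f"sha256 is {actual_hash}, database expects {expected_sha256_b64}")

    return problems
```

For the warning above, the arguments would be the path to `duplicati-b50688453e5d24dd7810fc828394df504.dblock.zip.aes` on the backup drive (its drive letter is not given in the thread), `52421181`, and `"mfq32T01sdofr6xC6KCFXfsnXeRjbrWY7snym4yg3z4="`.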

After loss of drive, there is no backup job to go to. Did you do job import or recreate?

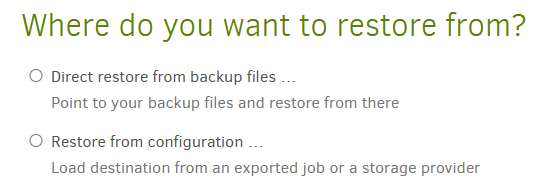

I had a backup job that was backing up my main external hard drive to another external hard drive. The main one failed. My laptop itself is completely fine. So I just went to the backup job in the UI, selected “restore files”, and picked the two folders that came from my main external drive (omitting the one folder from my laptop’s hard drive, since I don’t need that restored).

What is Destination type? Can you verify size?

The destination (I assume this is where it’s backing up to) is an external hard drive. I’m not sure what you mean by “can you verify size”: size of what? If you mean the size of the lost files, not really. The failed drive is in too poor a shape to count all the files. I know the drive holds about 475GB in total, so that’s roughly in the ballpark of the 392GB the backup says it has. I also didn’t back up the whole drive, just the two main folders on it (I didn’t back up any top-level hidden files or other invisible stuff).
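The sizes being compared here mix units and mix source data with backup data. A short Python sketch converting the byte counts from the log into decimal GB and binary GiB (the unit Windows Explorer labels “GB”) suggests the “392GB” figure matches `KnownFileSize`, which sits under `BackendStatistics` and describes the compressed, encrypted files at the destination, not the source data. `SizeOfRestoredFiles` is the closer measure of what actually came back. The 475GB drive figure is approximate and is assumed here to be in decimal (vendor) units.

```python
GB = 1000 ** 3   # decimal gigabyte, as printed on drive labels
GIB = 1024 ** 3  # binary gibibyte, what Windows Explorer calls "GB"

# Byte counts copied from the restore log; the drive size is the approximate
# figure from the post, assumed to be decimal GB.
figures = {
    "SizeOfRestoredFiles": 415_573_583_119,
    "KnownFileSize (destination files)": 421_077_134_544,
    "BytesDownloaded": 389_195_677_785,
    "Source drive (approx.)": 475 * GB,
}


def describe(num_bytes: int) -> str:
    """Render a byte count in both decimal GB and binary GiB."""
    return f"{num_bytes / GB:8.2f} GB = {num_bytes / GIB:8.2f} GiB"


for label, num_bytes in figures.items():
    print(f"{label:36} {describe(num_bytes)}")
```

Because deduplication and compression shrink the destination, and the destination also holds older versions, the destination total and the restored total are not expected to match exactly.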

Is there an actual message or screenshot?

The relevant messages are all above.

Your numbers were also far higher earlier

Yeah, after restoring the whole backup, I switched to attempting to restore only the individual files that I got warnings and errors about.

If you verify a file and it’s good, then it’s good.

I meant that I can verify that the file is there and has the right size. Does Duplicati provide a process for verifying the contents/hash of all the files? I’d love to run that verification process if possible. I believe I saw someone mention that this is run automatically after a restore, but a double check would give good peace of mind.
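The RestoreFileFailed errors in the log appear to be the result of Duplicati’s own post-restore check: each reports a file hash that did not match the expected one. To re-check a single file by hand, a sketch like the following computes the same kind of value. It assumes the hashes in those messages are Base64-encoded SHA-256 digests of the file contents (Duplicati’s default file hash, and consistent with the 44-character strings in the log). `duplicati_style_hash` and `check_restored_file` are hypothetical helper names.

```python
import base64
import hashlib
from pathlib import Path


def duplicati_style_hash(path: Path, chunk_size: int = 1 << 20) -> str:
    """Base64-encoded SHA-256 of a file's contents, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return base64.b64encode(digest.digest()).decode("ascii")


def check_restored_file(path: Path, expected_hash: str) -> bool:
    """Compare a restored file against the 'expected hash' quoted in a RestoreFileFailed error."""
    actual = duplicati_style_hash(path)
    if actual != expected_hash:
        print(f"MISMATCH {path}: got {actual}, expected {expected_hash}")
        return False
    print(f"OK       {path}")
    return True
```

For the failed MP3 in the log, the call would be `check_restored_file(Path(r"D:\backup restore 2025-09-06\ok\Classic Rock\Top\chill\Beach Boys - California Dreamin'.mp3"), "4Tkgf9UzdnyxI9rkpXnAaZFAFH15Za+4h98spiE1ZZs=")`. A match means the bytes on disk are the ones that were backed up; a size check alone cannot show that.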

Use the AFFECTED command to see which source files were affected. Listed below it are list-broken-files and purge-broken-files.

I will try that later
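AFFECTED works from the names of remote volumes, and those names are already in the FailedToRetrieveFile errors. A small Python sketch that pulls them out of the error strings, deduplicated and in first-seen order, follows. It assumes volumes use the `duplicati-<id>.dblock.zip.aes` naming seen in this log (other compression or encryption settings would need a wider pattern). `failed_volumes` is a hypothetical helper; check the Duplicati manual for the exact AFFECTED syntax before running it.

```python
import re

# Remote volume names as they appear in this log, e.g.
# duplicati-b4f228ccfe68540c1a505bc9b73dbf3f9.dblock.zip.aes
# (assumes zip compression; the .aes suffix is optional for unencrypted backups).
VOLUME_NAME = re.compile(r"duplicati-[0-9A-Za-z]+\.d(?:block|index|list)\.zip(?:\.aes)?")


def failed_volumes(errors):
    """Unique volume names from FailedToRetrieveFile errors, in first-seen order."""
    seen = {}
    for message in errors:
        if "FailedToRetrieveFile" in message:
            for name in VOLUME_NAME.findall(message):
                seen.setdefault(name, None)  # dict keeps insertion order
    return list(seen)


# Error strings copied from the restore log (the PatchingFailed entry is trimmed).
SAMPLE_ERRORS = [
    "2025-09-06 12:47:41 -05 - [Error-Duplicati.Library.Main.AsyncDownloader-FailedToRetrieveFile]: "
    "Failed to retrieve file duplicati-b4f228ccfe68540c1a505bc9b73dbf3f9.dblock.zip.aes\r\n"
    "CryptographicException: Failed to decrypt data (invalid passphrase?): Message has been altered, do not trust content",
    '2025-09-06 12:47:41 -05 - [Error-Duplicati.Library.Main.Operation.RestoreHandler-PatchingFailed]: '
    'Failed to patch with remote file: "duplicati-b4f228ccfe68540c1a505bc9b73dbf3f9.dblock.zip.aes", message: ...',
    "2025-09-06 13:14:07 -05 - [Error-Duplicati.Library.Main.AsyncDownloader-FailedToRetrieveFile]: "
    "Failed to retrieve file duplicati-b4f2737dba4454f25bc8e9e3ff709204f.dblock.zip.aes\r\n"
    "CryptographicException: Invalid header marker",
    "2025-09-06 18:35:15 -05 - [Error-Duplicati.Library.Main.AsyncDownloader-FailedToRetrieveFile]: "
    "Failed to retrieve file duplicati-b852168eebb434eb5b32f7d1c0b2ccaec.dblock.zip.aes\r\n"
    "CryptographicException: File length is invalid",
]

# Space-separated, ready to paste after the storage URL as AFFECTED arguments.
print(" ".join(failed_volumes(SAMPLE_ERRORS)))
```

Feeding it the full Errors list from the result log, rather than the sample, would catch the elided entries too.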

The most reliable way to test whether a file is damaged is to unzip it (if it’s a .zip) or decrypt it (if it’s an .aes). AES Crypt is a third-party GUI tool, and Duplicati ships SharpAESCrypt, which runs from the command line.

That’s a great idea. I will try that later as well.
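Before decrypting every volume, a cheap first pass can flag files whose header is already wrong. AES Crypt files begin with the ASCII bytes `AES` followed by a version byte, and the “Invalid header marker” errors in the log look like that check failing. The Python sketch below (hypothetical helper names) only inspects the first four bytes, so an “ok” result does not rule out damage later in the file. The “Message has been altered” errors are exactly that case, and only a full decryption with SharpAESCrypt or AES Crypt will catch them.

```python
from pathlib import Path

# AES Crypt files start with the ASCII bytes "AES", then a format-version byte.
AES_MAGIC = b"AES"


def triage_aes_volume(path: Path) -> str:
    """Cheap header check of one .aes volume; "ok" only means the header looks plausible."""
    if path.stat().st_size == 0:
        return "empty file"
    with path.open("rb") as fh:
        header = fh.read(4)
    if header[:3] != AES_MAGIC:
        # A zero-filled or overwritten block from a failing drive lands here.
        return f"bad header marker {header[:3]!r}"
    if len(header) < 4:
        return "truncated before version byte"
    return "ok"


def triage_folder(folder: Path) -> dict:
    """Run the header check on every duplicati-*.aes file in a folder."""
    return {p.name: triage_aes_volume(p) for p in sorted(folder.glob("duplicati-*.aes"))}
```

Calling `triage_folder` on the backup destination folder gives a short list of volumes to decrypt first; volumes reported as “ok” still need the full decryption test.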

Thank you for the help, btw!