There should be a dindex for each dblock. The prefix is fine, which unfortunately rules out the hope that this was merely a file naming problem.

When you delete a version, the database and remote reflect that. For the remote, that’s a dlist delete.

Deleting all versions should be pretty hard to do ordinarily, because of the allow-full-removal safeguard:

--allow-full-removal = false

By default, the last fileset cannot be removed. This is a safeguard to make sure that all remote data is not deleted by a configuration mistake. Use this flag to disable that protection, such that all filesets can be deleted.

In the case of dlist files that Duplicati doesn’t “know” about (stale database), possibly those are deleted during Repair. Repair generally deletes unknown files, and I’m not sure dlist files are exempted from deletion.

This seems unlikely because you didn’t go far back on the database, but I’ll ask…

What was your Backup retention setting before? If prior runs deleted files older than your restored DB, and Repair deleted files newer than the DB, that might explain why there are no dlist files left for any backup version.

If that’s not it, then I don’t know why you have no dlist files. It’s common with an interrupted first backup, and there’s an experimental method to recover a bit from that and avoid reuploading all of the data blocks.

History is lost though, because the database and dlist files were the only places that knew old versions.

If none of the destinations have dlist files (example name duplicati-20210310T182943Z.dlist.zip), then Recreate won’t have a file list to Recreate. Duplicati can ordinarily recreate missing dlist files if the backup information it needs is in the database, but using an old database throws in a mismatch wrinkle…
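A quick way to check a destination listing for dlist files is to match names against the pattern in the example above. This is only a sketch: the regular expression is inferred from the example names in this thread (`duplicati-20210310T182943Z.dlist.zip`, optionally with `.aes`), and `dlist_versions` is a made-up helper name, not anything from Duplicati itself.

```python
import re
from datetime import datetime, timezone

# Pattern assumed from the example names in this thread. The prefix and the
# compression/encryption suffix can differ between backup jobs.
DLIST_RE = re.compile(r"^(?P<prefix>.+)-(?P<stamp>\d{8}T\d{6}Z)\.dlist\.(?P<ext>.+)$")


def dlist_versions(names):
    """Return sorted (UTC datetime, name) pairs for names that look like dlist files."""
    found = []
    for name in names:
        m = DLIST_RE.match(name)
        if m:
            when = datetime.strptime(m.group("stamp"), "%Y%m%dT%H%M%SZ")
            found.append((when.replace(tzinfo=timezone.utc), name))
    return sorted(found)
```

Feeding it the output of `os.listdir()` on a local copy of the destination (or any file listing from S3) gives the backup versions that Recreate would have to work with. An empty result means no dlist files were found under that naming pattern.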

If you really want to, you can look in the restored old database with sqlitebrowser to see what dlists it has. Creating a bug report and posting that for someone else to look at the Remotevolume table would also work…
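If you would rather script it than click through sqlitebrowser, something like the following could list the dlists the old database knows about. Treat it as a sketch under assumptions: I am assuming the Remotevolume table has `Name`, `Type` and `State` columns and that dlist volumes are recorded with `Type = 'Files'`, so check the schema in your copy first. `list_dlists` is just an illustrative name.

```python
import sqlite3


def list_dlists(conn):
    """Return (Name, State) rows for dlist volumes recorded in the database."""
    # Assumption: Remotevolume has Name, Type and State columns, and dlist
    # volumes are stored with Type = 'Files'. Verify against your schema.
    cur = conn.execute(
        "SELECT Name, State FROM Remotevolume WHERE Type = 'Files' ORDER BY Name"
    )
    return cur.fetchall()
```

Work on a copy of the database, and open it read-only to be safe, for example `sqlite3.connect("file:/path/to/copy.sqlite?mode=ro", uri=True)` (the path is a placeholder).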

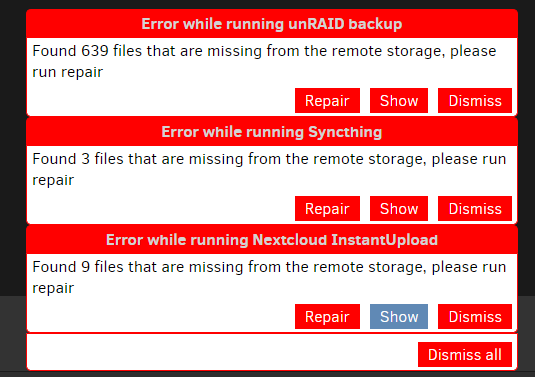

That also interferes a bit with my reading of the chronology (thanks for providing it), but it seems that instead of extra unknown files, you have now somehow flipped over to the opposite situation of missing files. Those are fairly small numbers of missing files. You could watch About → Show log → Live to see the exact missing files. Maybe one will be a dlist, and its filename, together with your recollection of the history, may help find the source of the error.

I don’t use Docker, but I guess you know that keeping Duplicati data in a container complicates changing containers. Maybe that’s why you had to copy things back in after changing containers in your sequence?

What does “no activity” mean? No backup run, so DB still matches remote? No changes of source files?

Does restore from current version (which sounds sort of strange by itself) refer to “backed up the current version” earlier, before at least one backup (or maybe all three) was run? A DB revert seems like it should still be complaining about files it hasn’t seen. Somehow it looks like your DB got ahead of your destination. However, you won’t know for sure until you see those file names, preferably dlists with recognizable dates.

Although it would be nice to figure out the exact sequence and what might have gone wrong at what point, presumably you want backups working again. I don’t know how much you care about your older versions, or the amount of data uploaded again to S3. Can you comment on your priorities, to try to steer next step?

It might also be possible to focus on getting going again, while preserving old data to try to look at later… Although it sounds like you don’t have log files, having old databases should reveal most remote activities. The RemoteOperation table is where you could likely see when and how the lost dlist files were deleted.

Paging through the job’s Remote log works, but is harder. Example delete that my Backup retention did:

Mar 11, 2021 2:43 PM: delete duplicati-20210310T194001Z.dlist.zip.aes
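The same kind of entry could probably be pulled out of an old database copy directly. The sketch below rests on assumptions: that the RemoteOperation table has `Timestamp` (Unix seconds), `Operation` and `Path` columns, and that deletes are recorded with `Operation = 'delete'`. Check the schema in your copy before trusting it. `deleted_dlists` is an illustrative name.

```python
import sqlite3
from datetime import datetime, timezone


def deleted_dlists(conn):
    """Return (UTC datetime, path) for each recorded delete of a dlist file."""
    # Assumptions: RemoteOperation has Timestamp (Unix seconds), Operation and
    # Path columns, and deletes are stored with Operation = 'delete'.
    rows = conn.execute(
        "SELECT Timestamp, Path FROM RemoteOperation "
        "WHERE Operation = 'delete' AND Path LIKE '%.dlist.%' "
        "ORDER BY Timestamp"
    ).fetchall()
    return [(datetime.fromtimestamp(ts, tz=timezone.utc), path) for ts, path in rows]
```

If the result is empty for every old database you have, the deletes may have happened outside the period those databases cover.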