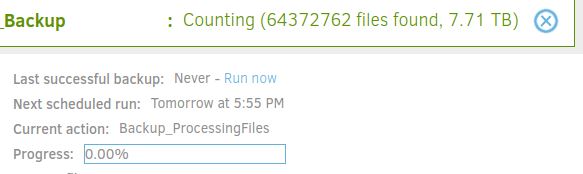

I am using Duplicati on Linux to back up folders that contain symlinks, and I have set symlinks to be stored in my backup settings. The drive shows 1.7 TB in use, but when the backup runs it keeps finding files for days and has now counted over 7 TB. How can this be corrected? As it stands, I’m not sure I will ever get a completed backup.

My first guess is that you have a recursive symlink somewhere. But you say the --symlink-policy option is set to “store”? If so, that shouldn’t happen.

I did a quick test by intentionally setting up a recursive symlink and wasn’t able to reproduce a problem as long as --symlink-policy was set to “store” (which is the default).
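If you want to rule out recursive symlinks yourself, something like the sketch below could help. It is not part of Duplicati; the function name `find_ancestor_symlinks` is made up for this post. It walks a source folder without following links and reports any symlink that resolves to one of its own parent directories, which is the kind of link that would cause endless recursion if symlinks were being followed.

```python
import os


def find_ancestor_symlinks(root):
    """Yield (link_path, resolved_target) for symlinks under root that
    resolve to a directory containing the link itself."""
    root = os.path.realpath(root)
    # followlinks=False: symlinked directories are listed but never entered,
    # so this walk cannot loop even if recursive links exist.
    for dirpath, dirnames, filenames in os.walk(root, followlinks=False):
        parent = os.path.realpath(dirpath)
        for name in dirnames + filenames:
            path = os.path.join(dirpath, name)
            if not os.path.islink(path):
                continue
            target = os.path.realpath(path)
            # The link is recursive if its parent directory is the target
            # itself or lies somewhere below the target.
            if os.path.commonpath([parent, target]) == target:
                yield path, target


if __name__ == "__main__":
    import sys

    for link, target in find_ancestor_symlinks(sys.argv[1]):
        print(f"{link} -> {target}")
```

Save it under any name and run it with the backup source folder as the only argument. If it prints nothing, there are no symlinks pointing back up the tree, at least not under that folder.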

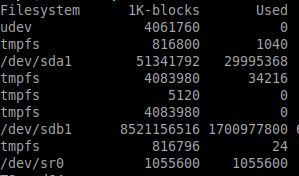

Initially the flag was not set. After seeing the found-file total grow to 8 TB, I checked and confirmed that the default was store. To be sure, I manually set the flag to store and started the backup again. The found-file total is approaching 8 TB again. Here is a screenshot of the file system. As you can see, there is less than 2 TB in use. I’m not sure what to check to clear this up.
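One more thing that might be worth checking, independent of symlinks: whether the files themselves add up to more than the disk usage. Sparse files and hard links can both make a per-path size total much larger than what the filesystem reports as used. I don't know how Duplicati counts these, so treat the sketch below purely as a diagnostic. The function name `size_summary` is made up, and it assumes Linux, where `st_blocks` is counted in 512-byte units.

```python
import os
import stat


def size_summary(root):
    """Return (apparent_bytes, allocated_bytes) for regular files under root.

    apparent_bytes adds st_size for every path, so hard-linked files are
    counted once per name. allocated_bytes adds the blocks actually
    allocated, counting each inode only once.
    """
    apparent = 0
    allocated = 0
    seen_inodes = set()
    for dirpath, dirnames, filenames in os.walk(root, followlinks=False):
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                st = os.lstat(path)  # lstat: never follow symlinks
            except OSError:
                continue  # file vanished or is unreadable; skip it
            if not stat.S_ISREG(st.st_mode):
                continue  # ignore symlinks, sockets, devices, etc.
            apparent += st.st_size
            key = (st.st_dev, st.st_ino)
            if key not in seen_inodes:
                seen_inodes.add(key)
                # Assumption: 512-byte block units, as on Linux.
                allocated += st.st_blocks * 512
    return apparent, allocated


if __name__ == "__main__":
    import sys

    apparent, allocated = size_summary(sys.argv[1])
    print(f"apparent:  {apparent / 1e12:.2f} TB")
    print(f"allocated: {allocated / 1e12:.2f} TB")
```

If the apparent total comes out near 8 TB while the allocated total is under 2 TB, the gap is in the files themselves rather than in a scan loop.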

What is shown if you go to the Duplicati Web UI, click About, click Show Log, click Live, and change the dropdown to Verbose? (Or maybe Profiling)

Can you see if there’s any sort of filesystem scan loop?

What filesystem are you using? There are some reports of issues with copy-on-write filesystems. However, there is also a report of a similar issue on ext4: