Did you try Duplicati.CommandLine.RecoveryTool.exe on it as described here (no result was reported then)? If the external drive backup is partially damaged, you may still have an older (stale) backup in Google Drive.

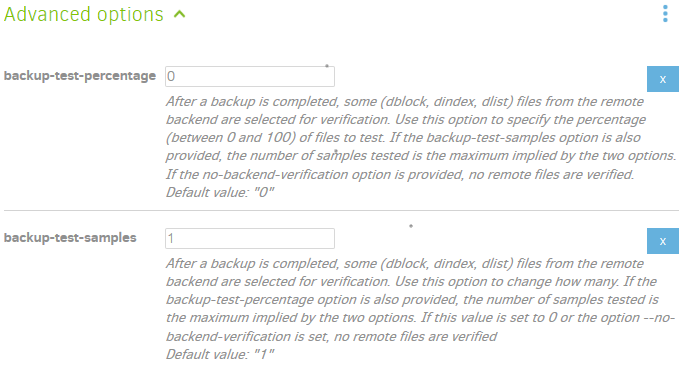

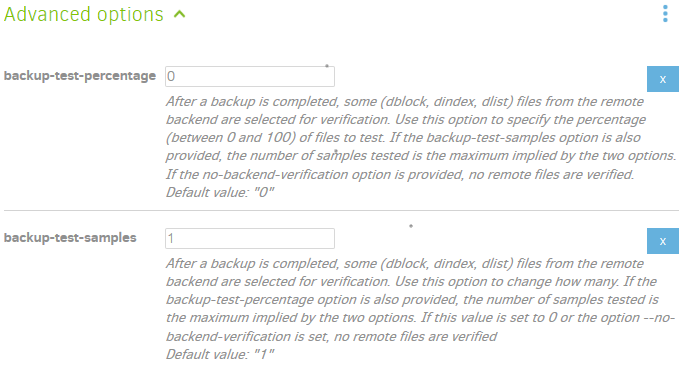

Verifying backend files describes the default self-check against corrupted backup files. It’s configurable, or it can be turned off in several ways (please don’t turn it off). --backup-test-samples can be set higher to increase coverage. There’s also a newer option that lets you set the percentage of files to be tested.

Those are the checks you’d run routinely. If you’re in a hurry for an answer and willing to wait for a thorough all-files test, run the TEST command from the UI’s Commandline screen: change the Command dropdown to test and the Commandline arguments to all. That changes the sample size so that every backup file gets tested. This will take a while, but it is probably more tolerable with a local hard disk than with a huge amount of downloading.
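If you’d rather script the same check outside the UI, the standalone command-line client takes the same command and the same all argument. The sketch below only assembles the argument list. The executable path, storage URL and database path in the example are hypothetical placeholders, and you would likely need to pass the same options (for example --dbpath and --passphrase) that the Commandline screen fills in for your job.

```python
def build_test_command(exe, storage_url, samples="all", options=None):
    """Build an argv list for Duplicati's TEST command.

    samples is a number of sample sets, or the word "all" to test every file.
    options maps option names (without the leading --) to values.
    """
    cmd = [exe, "test", storage_url, str(samples)]
    for name, value in (options or {}).items():
        cmd.append(f"--{name}={value}")
    return cmd


# Example with hypothetical paths; run it with subprocess.run(cmd, check=True):
# cmd = build_test_command(
#     r"C:\Program Files\Duplicati 2\Duplicati.CommandLine.exe",
#     "file://E:\\DuplicatiBackup",
#     options={"dbpath": r"C:\path\to\job-database.sqlite"},
# )
```

Building a list rather than one string avoids quoting problems with paths that contain spaces.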

A second way to test everything is to set the upload-verification-file option, run a backup, and then use the new verification file (duplicati-verification.json) that it uploads. Your Duplicati installation folder (C:\Program Files\Duplicati 2 for a base install, or look in C:\ProgramData\Duplicati\updates if you have updates) has a utility-scripts folder containing DuplicatiVerify.ps1, which can be run with your Duplicati backup folder path as its argument. It verifies all files against the records in the verification file.
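DuplicatiVerify.ps1 is the tool to use, but the idea behind it is simple enough to sketch. This Python version assumes the verification file is a JSON array whose entries carry "Name", "Hash" (a base64-encoded SHA-256 digest) and "Size" fields; those field names are an assumption, so check them against your own file first. It recomputes each hash and reports which files are fine, missing, or mismatched.

```python
import base64
import hashlib
import json
import os


def sha256_b64(path, chunk_size=1 << 20):
    """Return the base64-encoded SHA-256 digest of a file, read in chunks."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return base64.b64encode(h.digest()).decode("ascii")


def verify_folder(folder, manifest_name="duplicati-verification.json"):
    """Check every file listed in the manifest; return (ok, missing, bad) name lists."""
    # utf-8-sig tolerates a byte-order mark at the start of the file.
    with open(os.path.join(folder, manifest_name), encoding="utf-8-sig") as f:
        entries = json.load(f)
    ok, missing, bad = [], [], []
    for entry in entries:
        # Assumed fields: "Name", "Hash" (base64 SHA-256), "Size" (bytes).
        path = os.path.join(folder, entry["Name"])
        if not os.path.isfile(path):
            missing.append(entry["Name"])
        elif os.path.getsize(path) != entry["Size"] or sha256_b64(path) != entry["Hash"]:
            bad.append(entry["Name"])
        else:
            ok.append(entry["Name"])
    return ok, missing, bad
```

The size is compared first because it is cheap, so an obviously truncated file is flagged without hashing it.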

For any damaged file, you can use the AFFECTED command, passing in just its simple filename the same way the test command’s argument was passed, and it will report which source files were affected by that particular file.
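If the damaged name came from a full path (for example, copied from Explorer), only the final component should be passed. A minimal sketch that builds the argument list for one or more damaged files; the function name and the paths in the test are hypothetical, and ntpath.basename is used so Windows backslash paths split correctly on any OS.

```python
import ntpath


def build_affected_command(exe, storage_url, damaged_paths, options=None):
    """Build an argv list for Duplicati's AFFECTED command.

    damaged_paths may be full paths or bare names; only the simple
    filename (the last path component) is passed to the command.
    """
    names = [ntpath.basename(p) for p in damaged_paths]
    cmd = [exe, "affected", storage_url, *names]
    for name, value in (options or {}).items():
        cmd.append(f"--{name}={value}")
    return cmd
```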

For any damaged files, it would also be useful to know the exact file size and modified date. Those may provide clues as to whether an old file had hidden damage that escaped sampled verification, or whether a new file got damaged. For example, it used to be a requirement to use the Safely Remove Hardware tool before unplugging a USB drive. The default handling changed in Windows 10 version 1809, but I still use the special icon…
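A small sketch for collecting those details from the backup folder, sorted oldest first so it is easy to see whether the damaged files are old or recent. The function name is hypothetical; it simply reads each file’s size and last-modified time.

```python
import os
from datetime import datetime


def describe_files(folder, names):
    """Return (name, size_in_bytes, modified) tuples, oldest modification first."""
    rows = []
    for name in names:
        st = os.stat(os.path.join(folder, name))
        rows.append((name, st.st_size, st.st_mtime))
    rows.sort(key=lambda row: row[2])
    # Convert timestamps to readable local times only after sorting.
    return [
        (name, size, datetime.fromtimestamp(mtime).isoformat(sep=" ", timespec="seconds"))
        for name, size, mtime in rows
    ]
```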

Older Duplicati versions also had a bug that could sometimes make bad files if speed throttling was used. Eventually this should have been noticed by the sampled testing. Can you look at a backup log for section

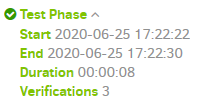

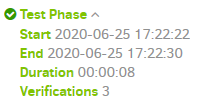

that results from a sample size of 1 (set of 3 files). It’s the same hash check that failed in the original post.

In addition to hash checks, nothing substitutes for an occasional actual restore test to some test folder if the goal is to trust that your backups are good. For the best test, set the --no-local-blocks option, which forces Duplicati to download backup files for the restore even when blocks are readily available from the original source files.
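After such a restore, a quick way to check the result is to compare the test folder against the originals. This sketch (function names are hypothetical) walks the original tree and reports files that are missing from the restore or whose contents differ. It assumes the source files have not changed since the backup ran; any that did will show up as differences even if the restore is correct.

```python
import hashlib
import os


def file_digest(path):
    """Return the hex SHA-256 digest of a file, read in 1 MiB chunks."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(1 << 20), b""):
            h.update(chunk)
    return h.hexdigest()


def compare_trees(original_root, restored_root):
    """Return (missing, different) relative paths, comparing restore to original."""
    missing, different = [], []
    for dirpath, _dirs, files in os.walk(original_root):
        for name in files:
            src = os.path.join(dirpath, name)
            rel = os.path.relpath(src, original_root)
            dst = os.path.join(restored_root, rel)
            if not os.path.isfile(dst):
                missing.append(rel)
            elif file_digest(src) != file_digest(dst):
                different.append(rel)
    return sorted(missing), sorted(different)
```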

There’s more that can be done, if desired, but for critical files it’s best to run several independent backups.