Environment info

- Duplicati version: 2.0.5.114_canary_2021-03-10
- Operating system: docker duplicati/duplicati:2.0.5.114_canary_2021-03-10
- Backend: mega.nz

Description

I tried to use mega.nz as the backup destination, but:

- From the web interface, I follow the steps in the web wizard. When I press “test the connection”, after a while I receive: “Failed to connect: API response: ResourceNotExists”

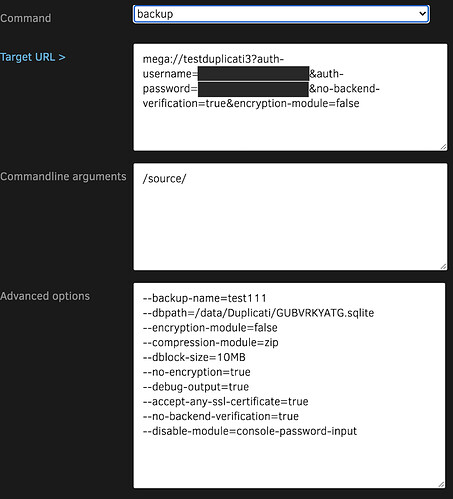

- From the CLI (inside the docker container), I run:

duplicati-cli backup "mega://XXXXXXXXXXXX?auth-username=YYYYY@ZZZZZ.HH&auth-password=WWWWWWWWWWWWWWW" /backups/ --no-encryption --upload-verification-file=false --dblock-size=10mb --debug-output=true

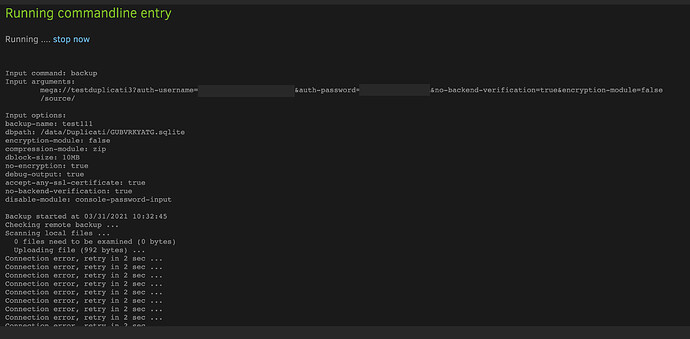

I receive the messages “Checking remote backup ...” and “Listing remote folder ...”, but then nothing happens.

Problem

I tested the mega login:

- with an empty account (no data inside) => NO PROBLEM, it works fast

- with an account holding ~800 GB of data => PROBLEM, docker exit code 137

Duplicati starts the operation correctly.

I monitored the duplicati container with docker stats and iftop, and I can see that the process is running and correctly downloading data from the mega API endpoint.

But after a while the docker container terminates with exit code 137, which indicates that the container received SIGKILL (manual intervention or ‘oom-killer’ [OUT-OF-MEMORY]).
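The reading of exit code 137 above follows the usual shell convention that a code above 128 means "killed by signal (code - 128)". A minimal sketch of that arithmetic is below; `decode_exit_code` is a hypothetical helper name used only for illustration, not part of docker or Duplicati.

```shell
#!/bin/sh
# Hypothetical helper: translate an exit code into either a plain exit
# status or the number of the signal that terminated the process.
decode_exit_code() {
    code="$1"
    if [ "$code" -gt 128 ]; then
        # Codes above 128 conventionally mean "terminated by signal (code - 128)".
        echo "killed by signal $((code - 128))"
    else
        echo "exited with status $code"
    fi
}

decode_exit_code 137   # prints: killed by signal 9
```

Signal 9 is SIGKILL. To tell a manual kill apart from the OOM killer, `docker inspect --format '{{.State.OOMKilled}}' duplicati` should print `true` when the kernel OOM killer terminated the container (assuming the stopped container has not been removed yet).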

Output of docker stats duplicati:

    CONTAINER ID   NAME        CPU %    MEM USAGE / LIMIT     MEM %    NET I/O          BLOCK I/O        PIDS
    05b61e17ba72   duplicati   21.72%   2.797GiB / 7.772GiB   35.98%   281MB / 6.64MB   259MB / 94.2kB   30

Shortly after this docker stats output, the docker container terminates with exit code 137.

Suggestions/Tests

Is it possible to increase the memory available to the /usr/bin/mono-sgen process?

I found this: https://stackoverflow.com/a/19375857. Is this a possible solution?

I modified the duplicati-server file in the docker image:

    #!/bin/bash
    EXE_FILE=/opt/duplicati/Duplicati.Server.exe
    APP_NAME=DuplicatiServer
    export MONO_GC_PARAMS=max-heap-size=500m,mode=balanced
    export MONO_LOG_LEVEL=debug
    exec -a "$APP_NAME" mono "$EXE_FILE" "$@"

I added MONO_GC_PARAMS to test whether it is possible to limit the amount of memory.

I also tested this configuration:

export MONO_GC_PARAMS="max-heap-size=1500m,nursery-size=128m,soft-heap-limit=600m"

I mount the file into the container through the docker-compose file as follows:

    version: "3"
    services:
      duplicati:
        image: duplicati/duplicati:2.0.5.114_canary_2021-03-10
        container_name: duplicati
        environment:
          - TZ=Europe/Rome
        volumes:
          - ./data:/data
          - ./backups:/backups
          - ./source:/source
          - ./duplicati-server:/usr/bin/duplicati-server
        ports:
          - 8200:8200
        deploy:
          resources:
            limits:
              memory: 2500M
            reservations:
              memory: 1500M

The results are the same.

The container still exits with code 137 once the mega backend starts processing the information downloaded from the mega API.