Hi there

I’m currently running 2.0.7.1_beta_2023-05-25 and just received a report that one of my backups failed.

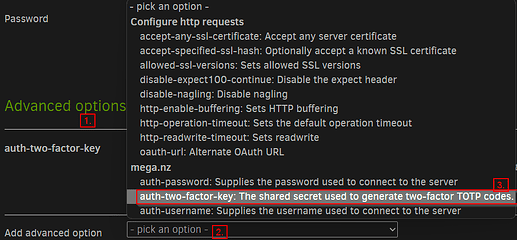

It’s a backup of a local directory to Mega (with the “new” MFA option set). On Friday (2 days ago) it worked without a problem; now it doesn’t.

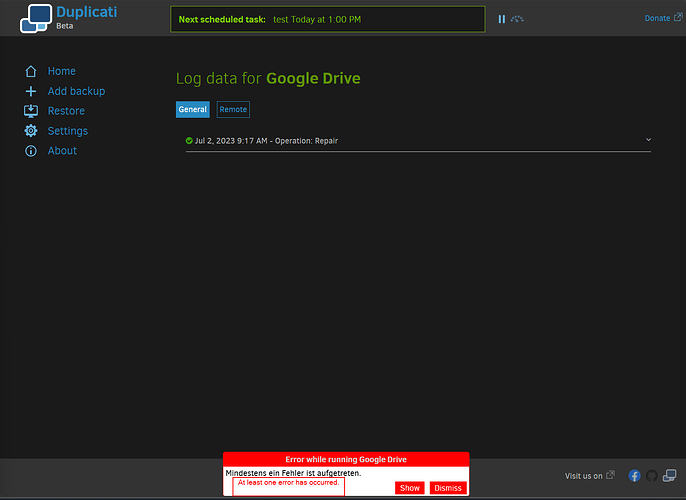

Just a few minutes ago I received the error message “At least one error has occurred.” Unfortunately, there is no entry at all in the log for the backup attempt.

I created a third backup to the same Mega account, and it works without a problem.

I tried verifying the files, repairing the database, and recreating the database; all of them ran without any errors.

I tested quite a lot, and the problem seems to be linked to 3 video files. Without them in the source, the backup works. The files are 423 MB, 353 MB and 387 MB in size.

It’s not related to the remaining storage on the account I used: I also tried with my other account, which has 30 GB of storage left, and hit the same problem.