I just started with Duplicati and it looks very nice. I’m getting the errors below. I’ve looked up issue #1400, but the cause is a bit cryptic.

I’m using the Docker image and have set it up to back up Ubuntu data in a volume (bind mount) to my NAS over FTP. The specific warning and errors I got are listed below. Is there some guidance on what they mean? In the warning, “/source” is where I store all the mapped volumes for my Docker containers, including Duplicati’s, so it makes sense that it’s having trouble backing up its own database. But what do the errors actually mean? Are they also a result of Duplicati trying to back itself up?

Warnings: [

2019-08-21 18:34:12 +10 - [Warning-Duplicati.Library.Main.Operation.Backup.FileBlockProcessor.FileEntry-PathProcessingFailed]: Failed to process path: /source/duplicati/data/Duplicati/73688870807967659085.sqlite-journal

]

Errors: [

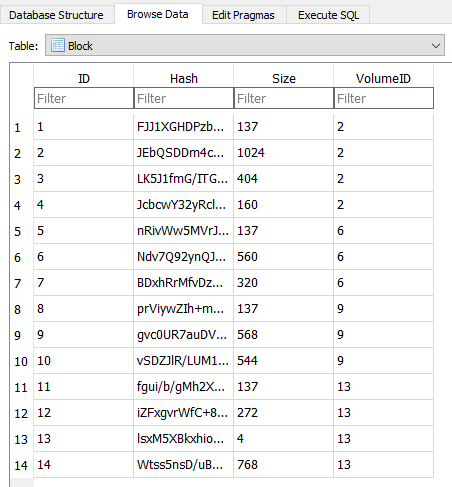

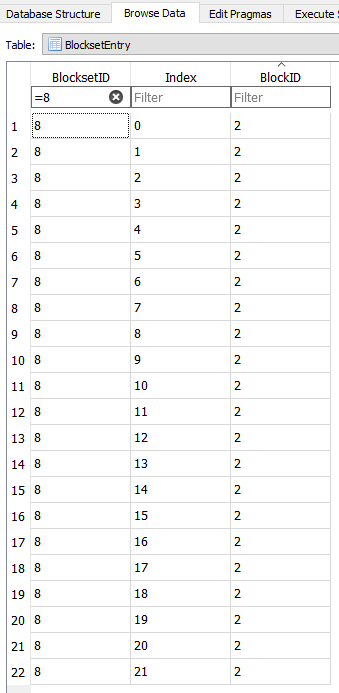

2019-08-21 18:34:12 +10 - [Error-Duplicati.Library.Main.Database.LocalBackupDatabase-CheckingErrorsForIssue1400]: Checking errors, related to #1400. Unexpected result count: 0, expected 1, hash: mu/BJh6mwPKcSq45jD7O1MaQtJt5p7qOo0Umtte8180=, size: 102400, blocksetid: 60533, ix: 2, fullhash: PDEDx9rze7wipdFK20Ckff59nXRtMSAROeu7rxIJUSQ=, fullsize: 423632,

2019-08-21 18:34:12 +10 - [Error-Duplicati.Library.Main.Database.LocalBackupDatabase-FoundIssue1400Error]: Found block with ID 106527 and hash mu/BJh6mwPKcSq45jD7O1MaQtJt5p7qOo0Umtte8180= and size 77448

]