Hello everyone!

I'm a new Duplicati user and very impressed so far. I've run into a problem I could use your help with; searching the forums has not turned up an answer. One of my backup jobs repeatedly backs up files that I have not touched in months. I turned on verbose logging, and the affected files produce entries like these:

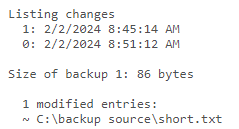

2024-01-31 18:28:13 -05 - [Verbose-Duplicati.Library.Main.Operation.Backup.FilePreFilterProcess.FileEntry-CheckFileForChanges]: Checking file for changes U:\Movies\Finding Dory (2016).mkv, new: False, timestamp changed: False, size changed: False, metadatachanged: True, 1/1/2021 6:22:24 PM vs 1/1/2021 6:22:24 PM

2024-01-31 18:32:48 -05 - [Verbose-Duplicati.Library.Main.Operation.Backup.FileBlockProcessor.FileEntry-FileMetadataChanged]: File has only metadata changes U:\Movies\Finding Dory (2016).mkv

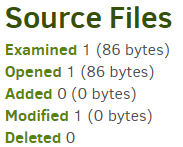

After this, the file shows up among the “48265 modified entries:” listed in the output of the command-line compare function.

It looks like Duplicati is detecting a metadata change, even though I am certain that this file (and hundreds of similar files) has not been touched in ages, and neither have their parent directories. I suspect this has something to do with the fact that the files live on an Ubuntu server that is mapped to my Windows 11 computer as a shared drive (U:). Duplicati runs on the Windows computer.
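To help narrow this down, here is a minimal Python sketch I could run on the Windows machine between two backups to see whether anything about the file actually changes as seen through the mapped drive. I have not confirmed which attributes Duplicati includes in its metadata comparison, so the set of fields below is my own guess, and the function names and the state file are hypothetical, just for illustration.

```python
import json
import os
import sys
from pathlib import Path


def snapshot(path):
    """Collect stat fields that might plausibly feed a metadata check (a guess)."""
    st = os.stat(path)
    return {
        "size": st.st_size,
        "mtime_ns": st.st_mtime_ns,
        # On Windows st_ctime is the creation time; on Linux it is the inode change time.
        "ctime_ns": st.st_ctime_ns,
        "mode": st.st_mode,
        # st_file_attributes exists only on Windows; record None elsewhere.
        "attributes": getattr(st, "st_file_attributes", None),
    }


def diff_snapshots(old, new):
    """Return {field: (old_value, new_value)} for every field that differs."""
    return {k: (old.get(k), new[k]) for k in new if old.get(k) != new[k]}


def check(path, state_file):
    """Compare current metadata with the saved snapshot, then save the new one."""
    current = snapshot(path)
    state = Path(state_file)
    previous = json.loads(state.read_text()) if state.exists() else None
    state.write_text(json.dumps(current))
    return {} if previous is None else diff_snapshots(previous, current)


if __name__ == "__main__" and len(sys.argv) == 3:
    changed = check(sys.argv[1], sys.argv[2])
    print(changed or "no differences from previous snapshot (or first run)")
```

Running it once after a backup and again before the next one (passing the file path on U: and a local state file such as state.json as the two arguments) would show whether any of these fields drift on their own. If nothing differs, the change is presumably in something this sketch does not capture, such as permissions.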

Perhaps using --disable-filetime-check would resolve the issue, but I expect it would significantly prolong backup times. Are there any other potential solutions?

Thanks!