Can Duplicati make a consistent backup of a Postgres database while it is online?

If you can get PostgreSQL to dump to files, those can be backed up. It sounds like the SQL dump method should work, and could be started from a Duplicati run-script-before, but you can read the manual yourself.
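As a rough idea of what such a script could do, here is a minimal Python sketch that dumps one database to a file with `pg_dump`. It assumes Python 3 and `pg_dump` are both available wherever the script runs, which may not be the case on every system. The function names, the database name, and the dump path are made up for illustration.

```python
import subprocess


def build_dump_command(db_name, dump_path):
    """Return the pg_dump argument list for a plain-text SQL dump."""
    return ["pg_dump", "--dbname=" + db_name, "--file=" + dump_path]


def run_dump(db_name, dump_path):
    """Run pg_dump and return its exit status (0 means success)."""
    try:
        return subprocess.run(build_dump_command(db_name, dump_path)).returncode
    except FileNotFoundError:
        # pg_dump is not installed where this script runs
        return 127
```

To use it as the script itself, add a `#!/usr/bin/env python3` first line and end the file with something like `sys.exit(run_dump("mydb", "/backups/mydb.sql"))` after `import sys`. Both values are placeholders, and the dump path should be inside a folder that Duplicati backs up. Connection details (host, user, password) are left to the usual PostgreSQL client settings.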

File system level backup online seems ruled out, and WAL archiving sounds complicated but you can look.

Duplicati has no special support for PostgreSQL as far as I know, but it can back up files, preferably ones that do not change in the middle of the file’s backup, though there’s a chance Duplicati will work if PostgreSQL is willing.

Understood. In short, don’t use Duplicati. I will look for another method, thank you!

Not exactly. I think the SQL dump method will work online. The WAL archiving one might. The file system level backup can’t be done online. There are only three methods defined by PostgreSQL. Good luck with

and please report back if somebody has devised something that the PostgreSQL manual doesn’t know of.

Or maybe somebody has a better built-in integration (without requiring help) with their SQL dump method.

@talesam I’m wondering if the backup without dump would work with PostgreSQL.

I’m using a few proprietary databases that have a “backup mode”. Backup mode basically flushes everything it can from the WAL to the database, and then locks the database until the backup is done.

This means that the database is consistent during the backup and the WAL file keeps growing. And it doesn’t require full copy of the database to be produced for backup purposes alone. Very important feature with systems where the databases are huge.

Afaik, this is something which should work with any WAL mode database: SQLite, MS SQL Server and so on. I don’t remember offhand whether Postgres uses a separate WAL file or whether the data is actually written to the database directly due to MVCC.

In short, just make sure the data being backed up is consistent during the backup, and you’re good.

I will use a script that runs before Duplicati to make the database backup; I think this will work better.

Do I configure this in the docker-compose or in the duplicati?

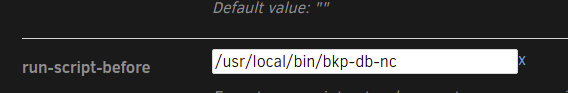

--run-script-before

I don’t use Docker, but The Compose Specification doesn’t look relevant. If you have an idea, what is it?

You want to run the script before every backup, I assume, so --run-script-before seems like it does that.

I don’t know if I did it right. I put the path of my host executable in the Duplicati interface, but I don’t know if it will work.

What exactly is this “host executable”?

EDIT:

Chapter 25. Backup and Restore (or equivalent for whatever version you have) are your options.

25.1. SQL Dump is what you’re trying to do. Also make sure you understand how to do restores.
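For the restore side, a plain-text dump is replayed with `psql`. Here is a small Python sketch of that step, assuming the target database already exists and is empty and that `psql` is on the path. The function names and arguments are hypothetical.

```python
import os
import subprocess


def build_restore_command(db_name, dump_path):
    """Return a psql argument list that replays a plain-text SQL dump."""
    return [
        "psql",
        "--set=ON_ERROR_STOP=on",  # stop at the first error instead of continuing
        "--dbname=" + db_name,
        "--file=" + dump_path,
    ]


def restore(db_name, dump_path):
    """Replay the dump into an existing database and return psql's exit status."""
    if not os.path.isfile(dump_path) or os.path.getsize(dump_path) == 0:
        raise ValueError("dump file is missing or empty: " + dump_path)
    return subprocess.run(build_restore_command(db_name, dump_path)).returncode
```

Trying this against a scratch database now and then is probably the only way to know the dumps are really usable.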

I already solved it, thanks.

I mapped a scripts directory in my docker-compose.yml as ./volumes/scripts:/usr/local/bin and put my database dump script inside the scripts directory.

It looks like the run-script-before option doesn’t work.

Please clarify what you are seeing. No script run, or you’re not getting the PostgreSQL part, or what?

EDIT:

The easiest approach is probably to separate the issues for initial testing. Make the script, get it right.

Run it by hand as the Duplicati user. Time it. If it’s over 60 seconds, increase run-script-timeout some.

Maybe have it log some status info to know where it is. Make sure you set the exit code appropriately:
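A rough Python sketch of both ideas, status logging and picking an exit code, could look like this. The code values are the ones I recall from the run-script example file that ships with Duplicati 2.x, so treat them as an assumption and compare with the example in your own install. `log`, `exit_code_for`, and the log path argument are made-up names.

```python
import time

# Exit codes as I recall them from Duplicati's run-script example (assumption,
# verify against your version):
OK_RUN = 0        # OK, run the operation
OK_SKIP = 1       # OK, do not run the operation
WARNING_RUN = 2   # warning, run the operation
WARNING_SKIP = 3  # warning, do not run the operation
ERROR_RUN = 4     # error, run the operation
ERROR_SKIP = 5    # error, do not run the operation


def log(message, log_path):
    """Append a timestamped status line to a log file."""
    with open(log_path, "a", encoding="utf-8") as handle:
        handle.write(time.strftime("%Y-%m-%d %H:%M:%S") + " " + message + "\n")


def exit_code_for(dump_status):
    """Map the dump command's exit status to a code for Duplicati."""
    return OK_RUN if dump_status == 0 else ERROR_SKIP
```

Here a failed dump stops the backup. Returning `ERROR_RUN` instead would let the backup go ahead with whatever dump file is already there, which is a judgment call.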

It’s not running the script, but I set it up on systemctl and it’s working fine.

As shown in the image above, I chose the option to run a script before the backup and entered the script path.

However, my Duplicati is in Docker, so I created a host directory that maps to the directory I specified in the Duplicati web interface. I accessed the container and the script was there, in the right directory, /usr/local/bin/my-script, but it didn’t run.

I know of no problem of this sort. Can you check that ls -lu /usr/local/bin/my-script is unchanged, that access for the user that Duplicati server runs as exists, that execute permission is set up, and so on? Although I don’t use Docker, I think you could probably try running the script inside the container, for testing. Some scripts won’t be runnable in a container because the interpreter they run in (first line) is not available.
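Those checks could be collected into a small Python helper like the sketch below, run as the same user and in the same environment as Duplicati. It needs Python 3 there, which is itself an assumption about the container. The function names are made up, and the shebang handling only covers the simple `#!/path` and `#!/usr/bin/env name` forms.

```python
import os
import shutil


def read_interpreter(script_path):
    """Return the interpreter named on the shebang line, or None if there is none."""
    with open(script_path, "rb") as handle:
        first_line = handle.readline().decode("utf-8", errors="replace").strip()
    if not first_line.startswith("#!"):
        return None
    parts = first_line[2:].split()
    if not parts:
        return None
    # "#!/usr/bin/env bash" names bash; "#!/bin/sh" names /bin/sh
    if os.path.basename(parts[0]) == "env" and len(parts) > 1:
        return parts[1]
    return parts[0]


def diagnose(script_path):
    """Return a list of likely reasons the script cannot be executed."""
    if not os.path.isfile(script_path):
        return ["file not found: " + script_path]
    problems = []
    if not os.access(script_path, os.X_OK):
        problems.append("no execute permission for the current user")
    interpreter = read_interpreter(script_path)
    if interpreter is None:
        problems.append("no shebang line (expected for a compiled binary)")
    elif shutil.which(interpreter) is None:
        problems.append("interpreter not found: " + interpreter)
    return problems
```

For example, `print(diagnose("/usr/local/bin/my-script"))` printing an empty list would mean none of these particular problems was found.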