TLDR: Would it be possible to implement an option to choose different storage classes for the index files and the data files?

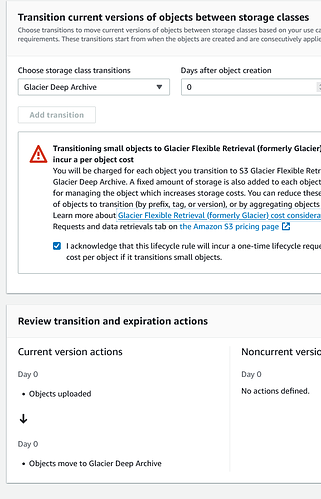

I’m trying to find the best and cheapest storage solution for a cloud backup of my NAS and have looked at AWS S3 Glacier. I have read multiple threads that advise against using Glacier and Deep Archive, and I’m aware of how difficult it is to recover files from them and that hot storage would be better. However, this is a last-resort backup in case my other backups fail, so I’m looking for the cheapest alternative. I have experimented with lifecycle rules, but as I understand it, files can be moved out of the Standard storage class no earlier than 30 days after they are uploaded.

Would it be possible to add an option to choose different storage classes for the index files and the data files in the AWS S3 bucket? On upload, the index files would go to the Standard storage class, while the data files would go directly to the Deep Archive storage class.
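To illustrate what I mean, here is a rough Python sketch of the selection logic, not Duplicati's actual code. The function name `storage_class_for` and the example file names are made up for illustration. I am also assuming that the file list (`dlist`) files should stay in Standard together with the index (`dindex`) files, and that only the data (`dblock`) files should go to Deep Archive.

```python
# Hypothetical sketch: choose an S3 storage class from a backup file name.
# The names below are illustrative assumptions, not Duplicati internals.

INDEX_STORAGE_CLASS = "STANDARD"      # hot storage for small metadata files
DATA_STORAGE_CLASS = "DEEP_ARCHIVE"   # cold storage for bulk data volumes


def storage_class_for(filename: str) -> str:
    """Return the storage class to request when uploading `filename`."""
    name = filename.lower()
    if ".dblock." in name:
        # Data volumes are only read again during a restore.
        return DATA_STORAGE_CLASS
    # dindex and dlist files (and anything unrecognized) stay in hot storage
    # so that they remain readable without a Glacier retrieval.
    return INDEX_STORAGE_CLASS


if __name__ == "__main__":
    examples = [
        "duplicati-b0123456789abcdef.dblock.zip.aes",
        "duplicati-i0123456789abcdef.dindex.zip.aes",
        "duplicati-20240101T000000Z.dlist.zip.aes",
    ]
    for f in examples:
        print(f, "->", storage_class_for(f))
```

The returned value would then be sent as the storage class of the upload request, for example through the `StorageClass` parameter of an S3 `put_object` call.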

With no-auto-compact and no-backend-verification set to true, Duplicati would not need to access the files stored in the Deep Archive storage class during normal backup runs.