The error message you included was due to aborting the process, so it doesn’t provide any insight into what might (or might not) have been going on.

Suggestions

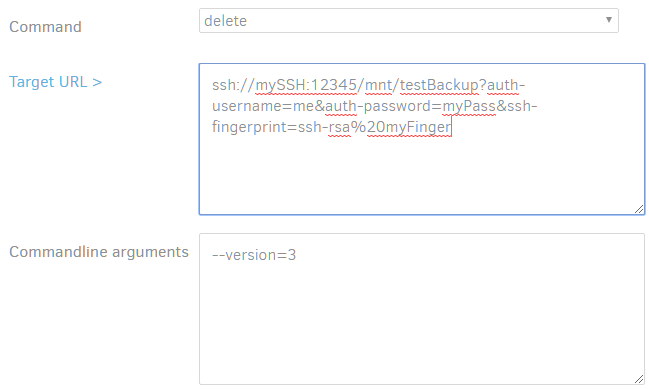

Firstly, I’d suggest trying the delete again but including the --no-auto-compact=true parameter. This turns off a bunch of behind-the-scenes cleanup steps that you can read more about below (if you care).

Secondly, I’d suggest that you try running the delete again and then opening a 2nd browser window to the Duplicati GUI (likely something like http://localhost:8200/ngax/index.html#/log) so you can check the “Live” log in “Information” or “Profiling” mode. This should let you know about each specific step Duplicati is taking, even if it doesn’t result in a GUI progress update.

Read on if you care about the guts going on behind the scenes that might actually be making the delete take so long.

What goes on behind the scenes

Keep in mind that Duplicati doesn’t store raw files that can just be deleted - it chops files up into smaller blocks (of size --blocksize, default 100K) and stores the blocks (if not already in a backup) in an archive file (of size --dblock-size, default 50MB) OR stores a reference to an existing same-content block (if found in an existing backup).
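To make the chunk-and-reference idea concrete, here is a minimal Python sketch. It is not Duplicati's actual code: the function names, the in-memory `store` dictionary, and the use of SHA-256 as the block identifier are all assumptions chosen for illustration. It only shows why a block that already exists is recorded as a reference rather than stored again.

```python
import hashlib

BLOCK_SIZE = 100 * 1024  # mirrors the --blocksize default mentioned above


def split_into_blocks(data: bytes, block_size: int = BLOCK_SIZE):
    """Yield fixed-size chunks of data (the last chunk may be shorter)."""
    for offset in range(0, len(data), block_size):
        yield data[offset:offset + block_size]


def backup_file(data: bytes, store: dict, block_size: int = BLOCK_SIZE) -> list:
    """Return the block references for one file.

    `store` is a hypothetical stand-in for the remote archive files:
    it maps a block hash to the block's content.
    """
    refs = []
    for block in split_into_blocks(data, block_size):
        digest = hashlib.sha256(block).hexdigest()
        if digest not in store:
            # New content: store the block itself.
            store[digest] = block
        # Either way, the file only keeps a reference to the block.
        refs.append(digest)
    return refs
```

Backing up the same content twice adds references but no new blocks, which is also why deleting one backup version cannot simply remove files on the remote side.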

All these block references are stored in the local .sqlite database file, so at its most basic level a delete is really just going through the database, finding all the block references for a particular backup version (in this case #3) and flagging them as “deleted”.

What happens next varies depending on other settings in your config, but typically if a dblock (archive) file is found to have at least X% (--threshold, default 25%) of the blocks stored in it flagged as deleted, then the archive file is downloaded and uncompressed, the “deleted” flagged blocks are removed, the remaining content is re-compressed, and the resulting file is uploaded.
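The threshold check can be sketched roughly like this. Again, this is an illustrative model rather than Duplicati's implementation: `volumes_to_compact`, the `volumes` mapping, and the `deleted` set are hypothetical names, and the sketch counts blocks as the description above does (the real tool may well measure wasted bytes instead).

```python
def volumes_to_compact(volumes: dict, deleted: set, threshold_pct: float = 25.0) -> list:
    """Return the names of archive files that would need rewriting.

    volumes: hypothetical mapping of archive file name -> list of block hashes.
    deleted: set of block hashes flagged as deleted.
    threshold_pct: mirrors the --threshold default mentioned above.
    """
    result = []
    for name, blocks in volumes.items():
        if not blocks:
            continue  # nothing stored, nothing to measure
        wasted = sum(1 for block_hash in blocks if block_hash in deleted)
        if 100.0 * wasted / len(blocks) >= threshold_pct:
            # This file would be downloaded, rewritten without the
            # deleted blocks, and uploaded again.
            result.append(name)
    return result
```

Every name this returns corresponds to a full download, rewrite, and upload cycle, which is where the time goes on a slow connection.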

After that, if dblock (archive) files are found to be smaller than size X (--small-file-size, default 20% of dblock-size) they are downloaded, uncompressed, merged, re-compressed into fewer ‘more fully utilized’ files, and then uploaded.
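The small-file merge step might look something like the sketch below. The greedy grouping strategy and the names `plan_merges` and `volume_sizes` are assumptions made for illustration; I haven't checked how Duplicati actually chooses which files to combine.

```python
def plan_merges(volume_sizes: dict, dblock_size: int, small_pct: float = 20.0) -> list:
    """Group undersized archive files into merge batches.

    volume_sizes: hypothetical mapping of archive file name -> size in bytes.
    dblock_size: target archive size (the --dblock-size setting).
    small_pct: mirrors the --small-file-size default (20% of dblock-size).
    """
    limit = dblock_size * small_pct / 100.0
    small = sorted(name for name, size in volume_sizes.items() if size < limit)

    groups, current, current_size = [], [], 0
    for name in small:
        size = volume_sizes[name]
        # Start a new batch if this file would overflow the target size.
        if current and current_size + size > dblock_size:
            groups.append(current)
            current, current_size = [], 0
        current.append(name)
        current_size += size
    if current:
        groups.append(current)

    # A batch of one file gains nothing from merging, so skip it.
    return [group for group in groups if len(group) > 1]
```

Each batch means downloading every file in it and uploading one merged replacement, so a delete that leaves many small files behind can trigger a lot of extra transfer.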

So there are a number of areas that could have caused apparent stalling in the code:

- if you have a LOT of records in the local sqlite file it can take a while to run all the commands identifying deletable blocks

- if the flagging of blocks as deleted causes a lot of dblocks (archives) to become sparsely populated it could take a while to download, re-compress, and re-upload them

- if the re-compression of dblocks (archives) causes multiple dblocks to become “too small” it could take a while to download, merge, re-compress, and re-upload them