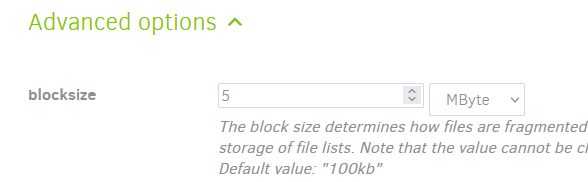

For a 5TB backup that is expected to grow, I’d probably set the blocksize to 5-10MB. This setting must be in place before the first backup runs, and it cannot be changed later.
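To get a feel for why a larger blocksize helps at this scale, here is a rough back-of-the-envelope sketch of how many blocks would need to be tracked for 5TB of source data. It assumes binary units (1MB = 1024 × 1024 bytes) and ignores deduplication and compression, so treat the numbers as upper-bound estimates only. The 100KB row is there purely for comparison (as far as I know that was the default in older 2.0 releases), and the `block_count` helper is just something made up for this example.

```python
# Rough estimate of how many blocks a backup has to track.
# Assumptions: binary units, no deduplication, no compression.
KB = 1024
MB = 1024 ** 2
TB = 1024 ** 4


def block_count(source_bytes: int, blocksize_bytes: int) -> int:
    """Number of blocks needed to cover the source (ceiling division)."""
    return -(-source_bytes // blocksize_bytes)


source = 5 * TB
for label, size in [("100KB", 100 * KB), ("5MB", 5 * MB), ("10MB", 10 * MB)]:
    print(f"blocksize {label:>5}: {block_count(source, size):,} blocks")
```

With these assumptions, 5MB blocks come out to roughly a million blocks, compared to tens of millions at 100KB.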

I don’t know whether Google Drive has a file count limit on the back end. If you want to reduce the number of files stored there, you could raise the remote volume size from its default of 50MB, but there is really no need to if Google Drive doesn’t limit file count. If you do change it, I wouldn’t go too large: 250MB or 500MB might be OK if the default 50MB won’t work.
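If it helps to put numbers on that, here is a similar rough sketch of how many remote volumes 5TB would produce at the sizes mentioned above. Same caveats: binary units, no deduplication or compression, and `volume_count` is just a made-up helper. As far as I know Duplicati also uploads a small index file alongside each volume, so the actual file count on the back end would be roughly double these figures.

```python
# Rough estimate of how many remote volume files end up on the back end.
# Assumptions: binary units, no deduplication, no compression,
# and index/list files are not counted.
MB = 1024 ** 2
TB = 1024 ** 4


def volume_count(stored_bytes: int, volume_size_bytes: int) -> int:
    """Number of remote volumes needed to hold the data (ceiling division)."""
    return -(-stored_bytes // volume_size_bytes)


stored = 5 * TB
for label, size in [("50MB", 50 * MB), ("250MB", 250 * MB), ("500MB", 500 * MB)]:
    print(f"volume size {label:>5}: {volume_count(stored, size):,} volumes")
```

So under these assumptions the default 50MB gives on the order of 100,000 volumes, and 250MB or 500MB brings that down to roughly 21,000 or 10,500.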

More info here: Choosing Sizes in Duplicati - Duplicati 2 User's Manual