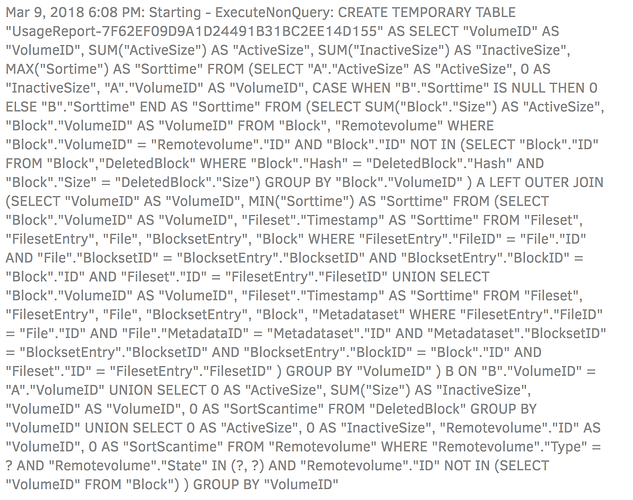

Yep, usage statistics. After I disabled it I still got log entries about SQL statements generating usage-reports, suggesting that it was still spending a significant amount of time on this step despite being configured to not send them.

Edit: Here’s a screenshot of the log during the Deleting Unwanted Files step.