If I take the query above, change the doubled double quotes to single double quotes, and run it, one line of the output says:

| BlocksetID | CalcLen | Length |
|---|---|---|
| 142091 | 10860672 | 10963072 |

So it matches the numbers in the popup error reported by the earlier code, which is quoted below this one.

This one took extra tracing because BlocksetEntry, filtered to BlocksetID 142091, had 108 blocks, as expected.

The numbers were sequential. The last block, at 1051785, had length 6272, and 107 * 102400 + 6272 = 10963072, which made everything seem nice and tidy and normal until I found that a block was behaving oddly…
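The gap between the two columns is exactly one full block: 10963072 - 10860672 = 102400. One explanation consistent with that (an assumption, not a diagnosis) is that one of the 102400-byte blocks contributes NULL to the sum: SQLite's `SUM()` skips NULLs, and a `LEFT JOIN` to a missing `Block` row also yields NULL. The sketch below builds a simplified, hypothetical version of the `Blockset` / `BlocksetEntry` / `Block` tables in memory, with synthetic block IDs, and runs a CalcLen-versus-Length check modeled on (not copied from) the query above.

```python
import sqlite3

# Hypothetical, simplified stand-ins for the real tables. NOT NULL is left off
# Block.Size only so this demo can insert a NULL there.
SCHEMA = """
CREATE TABLE Blockset (ID INTEGER PRIMARY KEY, Length INTEGER NOT NULL);
CREATE TABLE BlocksetEntry (BlocksetID INTEGER NOT NULL, "Index" INTEGER NOT NULL,
                            BlockID INTEGER NOT NULL);
CREATE TABLE Block (ID INTEGER PRIMARY KEY, Size INTEGER);
"""

# Sum the block sizes per blockset and report blocksets whose sum differs from
# the recorded Length. SUM() ignores NULL sizes, and the LEFT JOIN turns a
# missing Block row into a NULL size as well.
CHECK_SQL = """
SELECT A.ID AS BlocksetID, IFNULL(B.CalcLen, 0) AS CalcLen, A.Length
FROM Blockset A
LEFT JOIN (
    SELECT BlocksetEntry.BlocksetID, SUM(Block.Size) AS CalcLen
    FROM BlocksetEntry
    LEFT JOIN Block ON Block.ID = BlocksetEntry.BlockID
    GROUP BY BlocksetEntry.BlocksetID
) B ON A.ID = B.BlocksetID
WHERE IFNULL(B.CalcLen, 0) != A.Length
"""


def build_demo(null_block_index=None):
    """108 blocks: 107 of 102400 bytes plus a final 6272-byte block."""
    con = sqlite3.connect(":memory:")
    con.executescript(SCHEMA)
    blockset_id, block_size, last_size, count = 142091, 102400, 6272, 108
    con.execute("INSERT INTO Blockset VALUES (?, ?)",
                (blockset_id, (count - 1) * block_size + last_size))
    for i in range(count):
        size = last_size if i == count - 1 else block_size
        if i == null_block_index:
            size = None  # simulate one block whose size reads back as NULL
        con.execute("INSERT INTO Block VALUES (?, ?)", (i + 1, size))
        con.execute("INSERT INTO BlocksetEntry VALUES (?, ?, ?)",
                    (blockset_id, i, i + 1))
    return con


healthy = build_demo().execute(CHECK_SQL).fetchall()
broken = build_demo(null_block_index=50).execute(CHECK_SQL).fetchall()
print(healthy)  # []
print(broken)   # [(142091, 10860672, 10963072)]
```

With one full-size block reading as NULL, the sum becomes 106 * 102400 + 6272 = 10860672, which is the CalcLen in the table above.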

EDIT:

Deleting all 4 indexes on the Block table didn’t fix the odd SQL query result. I didn’t retry the calculation.
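For reference, dropping every index on one table can be scripted. This is a minimal sketch using Python's `sqlite3` module; the table definition and index names in the usage part are made up for the demo and are not the real ones. Indexes SQLite creates automatically for `UNIQUE` / `PRIMARY KEY` constraints have no `sql` text and cannot be dropped, so they are skipped.

```python
import sqlite3


def drop_table_indexes(con, table):
    """Drop every explicitly created index on `table`; return the names dropped."""
    # Automatic indexes (sqlite_autoindex_*) have sql IS NULL and are skipped.
    names = [name for (name,) in con.execute(
        "SELECT name FROM sqlite_master "
        "WHERE type = 'index' AND tbl_name = ? AND sql IS NOT NULL",
        (table,),
    ).fetchall()]
    for name in names:
        quoted = '"' + name.replace('"', '""') + '"'  # quote the identifier safely
        con.execute(f"DROP INDEX {quoted}")
    con.commit()
    return names


# Usage with a hypothetical table and hypothetical index names:
con = sqlite3.connect(":memory:")
con.executescript("""
CREATE TABLE Block (ID INTEGER PRIMARY KEY, Hash TEXT NOT NULL UNIQUE,
                    Size INTEGER NOT NULL);
CREATE INDEX idx_demo_size ON Block (Size);
CREATE INDEX idx_demo_hash_size ON Block (Hash, Size);
""")
print(sorted(drop_table_indexes(con, "Block")))  # ['idx_demo_hash_size', 'idx_demo_size']
```

A gentler experiment than dropping is SQLite's `REINDEX`, which rebuilds a table's indexes in place; saving the `sql` column from `sqlite_master` before dropping also makes it easy to recreate them afterwards.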

EDIT 2:

I used SQLite’s “Copy as SQL” on the offending block and modified the result into an UPDATE. I’m not sure I got the syntax right, but it didn’t help. I then used SQL to DELETE the row, verified the deletion with a SELECT, and ran an INSERT.
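The delete, verify, re-insert sequence can also be done in a single transaction so that a failure partway through leaves the original row in place. This is a minimal sketch with Python's `sqlite3`; the helper name `reinsert_row` and the demo table are hypothetical, and it assumes the key column identifies exactly one row.

```python
import sqlite3


def _q(ident):
    """Quote an SQL identifier."""
    return '"' + ident.replace('"', '""') + '"'


def reinsert_row(con, table, key_col, key):
    """Copy one row, delete it, confirm it is gone, then insert the copy back."""
    cur = con.execute(f"SELECT * FROM {_q(table)} WHERE {_q(key_col)} = ?", (key,))
    rows = cur.fetchall()
    if len(rows) != 1:
        raise LookupError(f"expected 1 row in {table} with {key_col} = {key!r}, got {len(rows)}")
    row = rows[0]
    cols = [d[0] for d in cur.description]
    col_list = ", ".join(_q(c) for c in cols)
    placeholders = ", ".join("?" * len(cols))
    # `with con` commits if the block succeeds and rolls back (restoring the
    # deleted row) if any statement inside it raises.
    with con:
        con.execute(f"DELETE FROM {_q(table)} WHERE {_q(key_col)} = ?", (key,))
        left = con.execute(f"SELECT COUNT(*) FROM {_q(table)} WHERE {_q(key_col)} = ?",
                           (key,)).fetchone()[0]
        if left != 0:
            raise RuntimeError("DELETE did not remove the row")
        con.execute(f"INSERT INTO {_q(table)} ({col_list}) VALUES ({placeholders})", row)
    return dict(zip(cols, row))


# Usage against a hypothetical in-memory table:
con = sqlite3.connect(":memory:")
con.execute("CREATE TABLE Block (ID INTEGER PRIMARY KEY, Hash TEXT NOT NULL, Size INTEGER NOT NULL)")
con.execute("INSERT INTO Block VALUES (1, 'abc', 102400)")
con.commit()
print(reinsert_row(con, "Block", "ID", 1))  # {'ID': 1, 'Hash': 'abc', 'Size': 102400}
```

If the copied row holds a NULL in a NOT NULL column, the INSERT raises `sqlite3.IntegrityError` and the `with` block rolls the DELETE back.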

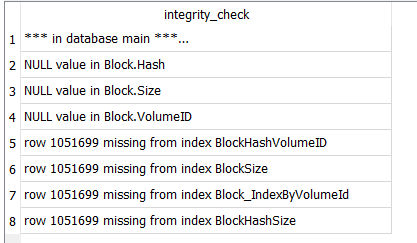

Problem persists. I took DB Browser for SQLite up on its offers to run quick_check and integrity_check, and was told about the NULL values I already knew about. Looking up the error, I found a source that talks about NOT NULL columns.

That’s how these columns are defined, so a NULL snuck in somehow. I closed and reopened the database and tried again.
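The same checks can be run outside DB Browser for SQLite. This is a minimal sketch with Python's `sqlite3`: it runs `PRAGMA quick_check` and `PRAGMA integrity_check`, then, using `PRAGMA table_info`, counts NULLs in every column of a table that is declared NOT NULL. The helper names are hypothetical, and using `typeof()` instead of `IS NULL` is only a precaution, in case the query planner simplifies `IS NULL` on a NOT NULL column; that is an assumption, not something verified against a particular SQLite version.

```python
import sqlite3


def run_checks(con):
    """Run both pragmas; a healthy database returns the single row 'ok' for each."""
    return {
        pragma: [row[0] for row in con.execute(f"PRAGMA {pragma}").fetchall()]
        for pragma in ("quick_check", "integrity_check")
    }


def nulls_in_not_null_columns(con, table):
    """Map each NOT NULL column of `table` that actually holds NULLs to its NULL count."""
    q_table = '"' + table.replace('"', '""') + '"'
    found = {}
    # PRAGMA table_info rows are (cid, name, type, notnull, dflt_value, pk).
    for _cid, name, _type, notnull, _default, _pk in con.execute(
            f"PRAGMA table_info({q_table})").fetchall():
        if not notnull:
            continue
        q_col = '"' + name.replace('"', '""') + '"'
        n = con.execute(
            f"SELECT COUNT(*) FROM {q_table} WHERE typeof({q_col}) = ?", ("null",)
        ).fetchone()[0]
        if n:
            found[name] = n
    return found


# Usage on a hypothetical healthy in-memory table; point connect() at a copy of
# the real database file to check it instead.
con = sqlite3.connect(":memory:")
con.execute("CREATE TABLE Block (ID INTEGER PRIMARY KEY, Hash TEXT NOT NULL, Size INTEGER NOT NULL)")
con.execute("INSERT INTO Block VALUES (1, 'abc', 102400)")
print(run_checks(con))                           # {'quick_check': ['ok'], 'integrity_check': ['ok']}
print(nulls_in_not_null_columns(con, "Block"))   # {}
```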

I probably didn’t get index complaints before because I had deleted the indexes. Now that I’ve reopened the database, they’re back.