Good day gentlemen

I received an error message when running my Duplicati backup today. I have attached a screenshot as well as the error log.

Is the error a serious one?

Has the backup operation succeeded, or should I be worried about it?

Welcome to the forum @FSNaval

Verifying backend files found a problem. What sort of destination is this? Can you check that file’s time?

Checking the three other failed backups below it would be best. Is it the same file mentioned each time?

The verification sample of backend files is not directly connected to the backup. It might sample old files; however, old files are relevant to newer backups because unchanged source data references the old files.

You can watch About → Show log → Live → Retry and at end of backup, click on a failure line for details.

Thank you for your reply.

No, it is not the same file. On the last backup operation, following your advice on viewing the log, I received the following error:

{“ClassName”:“Duplicati.Library.Main.BackendManager+HashMismatchException”,“Message”:“Hash mismatch on file "/tmp/dup-6b9d0566-5f70-4a1c-a08d-f37e23760f0b", recorded hash: m/xCpzanOcs5rUQXtq6sfzmMei2xasRmGwyPXPq/R2k=, actual hash WVA6eU5RoR95Lij+cd6vvJiihlxnqVR3QZRX2V0++VA=”,“Data”:null,“InnerException”:null,“HelpURL”:null,“StackTraceString”:" at Duplicati.Library.Main.BackendManager.GetForTesting (System.String remotename, System.Int64 size, System.String hash) [0x00065] in :0 \n at Duplicati.Library.Main.Operation.TestHandler.DoRun (System.Int64 samples, Duplicati.Library.Main.Database.LocalTestDatabase db, Duplicati.Library.Main.BackendManager backend) [0x0042f] in :0 ",“RemoteStackTraceString”:null,“RemoteStackIndex”:0,“ExceptionMethod”:null,“HResult”:-2146233088,“Source”:"Duplicati.

More broadly, what access do you have to the destination and its files?

The hash mismatch suggests that you have a remote file that has the correct size but unexpected contents.

I can’t tell if it is a recent file until you look at the timestamp, and it might not matter any.

Duplicati relies on storage being able to store and return files, but there’s an issue here.

When you say it is not the same file, note that the /tmp file name is different from the remote’s; however, you might find a complaint about its duplicati- file somewhere nearby in the log.

I believe I have root privileges; however, I will have to look into it.

Maybe, because I run the snapraid prehash and snapraid sync commands, snapraid is altering the hash of the file that Duplicati expects to find?

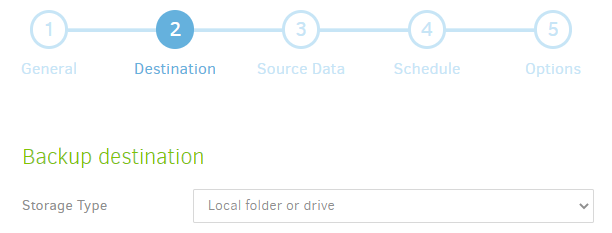

When did root privileges get mentioned, and how does it matter? On the Duplicati Destination screen, for example, what is your Storage Type? If credentials are needed, Duplicati presumably has them.

No idea. I am not familiar with it; however, Duplicati is not snapraid aware, and I hope snapraid is not altering file content. If you can get to the backup Destination files, you can try decrypting an example troublesome file with AES Crypt, or with SharpAESCrypt.exe in the Duplicati install folder (less friendly, CLI).

What OS is Duplicati installed on? Unless it is Windows, SharpAESCrypt.exe would be run under mono.

There are ways to check all the files with Duplicati at the source, or with Python or PowerShell at the destination; however, that might not be as informative as human-led testing such as that mentioned in the paragraph above.

I am sorry, my misunderstanding. The Duplicati destination is a local USB case with a Toshiba SSD drive.

Duplicati is installed on Debian 10 running OpenMediaVault as NAS software.

It is installed using Docker.

Does your system have sha256sum? If so, run it on duplicati-b7b0e82fa5bdb4e1dbeca1471c7c910b0.dblock.zip.aes, then give the resulting hexadecimal value to https://cryptii.com/pipes/binary-to-base64. A result of pQ0Y7IaW82bYLC2aXqf9SzeXHzN8QiVfxcVoDFI0M7w= would show that you got the same unexpected result as the log did. Please also tell me that file’s length.
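If the website step is awkward, the same check can be done locally in Python. This is only a minimal sketch, assuming the hash Duplicati records is the Base64 form of the file's SHA-256 digest (which is what the sha256sum plus Base64 conversion implies). The function names are made up for illustration and are not part of Duplicati.

```python
import base64
import hashlib
import os
import sys


def hex_to_base64(hex_digest):
    """Convert a hexadecimal digest (as printed by sha256sum) to Base64."""
    return base64.b64encode(bytes.fromhex(hex_digest)).decode("ascii")


def sha256_base64(path, chunk_size=1024 * 1024):
    """Return the SHA-256 digest of a file as Base64, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return base64.b64encode(digest.digest()).decode("ascii")


if __name__ == "__main__":
    # For each file given on the command line, print name, length, and hash.
    for path in sys.argv[1:]:
        if os.path.isfile(path):
            print(path, os.path.getsize(path), sha256_base64(path))
```

Saved under any name you like and run with the path to the dblock file as its argument, it prints the file's length in bytes and its Base64 hash, which can be compared against the "recorded hash" and "actual hash" values in the log.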

I should say that a bad encrypted file usually fails at decryption. I am unsure why yours got a hash error instead.

Does your OMV system have Python? If so, you can set upload-verification-file, run a backup, and then verify all the files by running utility-scripts/DuplicatiVerify.py from the install area and giving it the backup path.

Alternatively, you can run Duplicati Commandline, change command to test, change Commandline arguments to all, maybe add number-of-retries=0 to fail faster, then see what other bad files it finds.