What does “copy” refer to? Do you mean backup (which is not a copy)? Or did you mean compact? The status bar label doesn’t show every step, but compact is consistent with your screenshot: I think it runs under “Deleting unwanted files” and does Get operations. More detail below.

What does this mean? Duplicati only runs on one PC at a time. Below it, you point to a Get, which is a download. Deleting a file would show Delete. Or does “pc” mean piece rather than personal computer? Remote deletes are done one at a time because many destinations can only handle one delete at a time.

When compact runs, the downloads probably take longer than the deletes, because they are data transfers. There are also uploads, which take time too. Compact runs periodically and has several settings that can be adjusted to make it run more often with less to do each time, or less often with more to do per run.

Sometimes compact only has to do deletes, but commonly it does downloads and uploads as well.
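To illustrate why some compact runs are delete-only while others also download and upload, here is a minimal Python sketch of a simplified decision model. It is an assumption, not Duplicati’s actual code: `Volume`, `plan_compact` and `threshold_pct` are hypothetical names, with `threshold_pct` only loosely inspired by Duplicati’s real `--threshold` option.

```python
from dataclasses import dataclass

@dataclass
class Volume:
    name: str
    size: int    # bytes in the remote dblock file
    wasted: int  # bytes of blocks that no remaining version references

def plan_compact(volumes, threshold_pct=25):
    """Hypothetical, simplified model of which volumes compact would touch."""
    # A volume that is entirely waste can just be deleted; no download needed.
    delete_only = [v.name for v in volumes if v.wasted >= v.size]

    total = sum(v.size for v in volumes)
    wasted = sum(v.wasted for v in volumes)
    repack = []
    # Only bother repacking once overall waste passes the threshold.
    if total and 100 * wasted / total > threshold_pct:
        # Partly wasted volumes above the threshold must be downloaded,
        # have their live blocks repacked and uploaded, then be deleted.
        repack = [
            v.name for v in volumes
            if 0 < v.wasted < v.size and 100 * v.wasted / v.size > threshold_pct
        ]
    return delete_only, repack

vols = [Volume("a", 100, 100), Volume("b", 100, 60),
        Volume("c", 100, 0), Volume("d", 100, 10)]
print(plan_compact(vols))                    # delete "a", repack "b"
print(plan_compact(vols, threshold_pct=70))  # higher threshold: delete only
```

Raising the threshold in this model trades smaller, more frequent runs for larger, rarer ones: waste accumulates longer before any download/upload work is triggered, while fully empty volumes can still simply be deleted.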

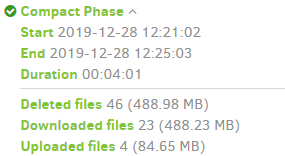

Here’s one of mine with the new log screen. If you have old screen, data is in log, but not as nicely:

The less pretty version, which I think is what beta users have:

    "CompactResults": {
        "DeletedFileCount": 46,
        "DownloadedFileCount": 23,
        "UploadedFileCount": 4,
        "DeletedFileSize": 512728374,
        "DownloadedFileSize": 511942187,
        "UploadedFileSize": 88763042,
        "Dryrun": false,
        "VacuumResults": null,
        "MainOperation": "Compact",
        "ParsedResult": "Success",
        "Version": "2.0.4.37 (2.0.4.37_canary_2019-12-12)",
        "EndTime": "2019-12-28T17:25:03.1547519Z",
        "BeginTime": "2019-12-28T17:21:02.0645027Z",
        "Duration": "00:04:01.0902492",
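To put numbers on where the time goes, here is a small Python sketch that reads a few fields copied from the CompactResults excerpt and computes net space freed and average transfer rate. The helper names are hypothetical, and the duration parser only handles the `hh:mm:ss.fffffff` form shown (no days part).

```python
import json

# Fields copied from the CompactResults excerpt; the rest of the log is omitted.
RESULTS = json.loads("""
{
  "DeletedFileSize": 512728374,
  "DownloadedFileSize": 511942187,
  "UploadedFileSize": 88763042,
  "Duration": "00:04:01.0902492"
}
""")

def duration_seconds(text):
    """Parse an 'hh:mm:ss.fffffff' duration (a leading days part is not handled)."""
    hours, minutes, seconds = text.split(":")
    return int(hours) * 3600 + int(minutes) * 60 + float(seconds)

def summarize(r):
    secs = duration_seconds(r["Duration"])
    transferred = r["DownloadedFileSize"] + r["UploadedFileSize"]
    return {
        "seconds": secs,
        # Space given back on the remote: old files removed minus new files added.
        "net_freed_bytes": r["DeletedFileSize"] - r["UploadedFileSize"],
        "transferred_bytes": transferred,
        "avg_mb_per_s": transferred / secs / 1e6,
    }

print(summarize(RESULTS))
```

For this run that works out to roughly 424 MB freed for roughly 601 MB moved over the network in about four minutes, which is why the downloads and uploads, not the deletes, dominate compact time.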

Please describe what you’re seeing. You have a log running, right? Profiling level produces a lot of output, but Information level should show the sequence of operations well.

I forget the exact details, but I think compact (which runs under the status bar banner “Deleting unwanted files” and is basically the cleanup of space newly wasted by the version deletes) should look like this: downloads of dblock files that became too empty, uploads of new dblock files after repacking, then deletes of the old dblock files, which are no longer needed because their blocks now live in the new dblocks.
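The ordering described above (download, repack, upload, then delete) can be sketched in Python as follows. This is a simplified model under stated assumptions, not Duplicati’s implementation: `compact_steps`, `volume_limit` and the `new-N` names are hypothetical, and blocks are reduced to `(id, size)` pairs. The key property it shows is that an old volume is only deleted after every live block it held has been uploaded in a new volume.

```python
def compact_steps(old_volumes, live_blocks, volume_limit):
    """Return the ordered (operation, name) steps of a hypothetical compact.

    old_volumes: dict of volume name -> list of (block_id, size)
    live_blocks: set of block ids still referenced by some version
    volume_limit: bytes that fill one new volume (stand-in for dblock size)
    """
    steps = []
    pending_size = 0
    new_count = 0
    finished = []  # old volumes whose live blocks are all pending or uploaded

    def flush():
        nonlocal pending_size, new_count
        new_count += 1
        steps.append(("put", f"new-{new_count}"))
        # Old volumes are deleted only once their blocks are safely uploaded.
        for name in finished:
            steps.append(("delete", name))
        finished.clear()
        pending_size = 0

    for name, blocks in old_volumes.items():
        steps.append(("get", name))
        for block_id, size in blocks:
            if block_id in live_blocks:  # keep only still-referenced blocks
                pending_size += size
                if pending_size >= volume_limit:
                    flush()
        finished.append(name)

    if pending_size:
        flush()
    else:  # nothing left to upload, so remaining old volumes can go
        for name in finished:
            steps.append(("delete", name))
    return steps

old = {"a": [(1, 10), (2, 10)], "b": [(3, 10), (4, 10)], "c": [(5, 10), (6, 10)]}
for step in compact_steps(old, live_blocks={1, 3, 5, 6}, volume_limit=30):
    print(step)
```

Here the three half-empty volumes are downloaded, their live blocks fill `new-1` and part of `new-2`, and each old volume is deleted right after the upload that completes its blocks.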

You should be able to see a repeating rhythm, but I can’t tell what you’re seeing from one screenshot. Compact - Limited / Partial is a feature request to add even more settings for fine-tuning the run.
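One way to spot that rhythm in a saved Information-level log is to collapse the backend operations into runs. This Python sketch assumes lines shaped roughly like `Backend event: Get - Started: ...`; that pattern and the sample lines are assumptions for illustration, so check your own log for the exact wording.

```python
import re
from itertools import groupby

# Assumed shape of a backend event line (hypothetical; verify against your log).
EVENT = re.compile(r"Backend event: (Get|Put|Delete|List) - Started")

def rhythm(lines):
    """Collapse started backend operations into (operation, count) runs."""
    ops = [m.group(1) for line in lines if (m := EVENT.search(line))]
    return [(op, len(list(group))) for op, group in groupby(ops)]

sample = [
    "Backend event: Get - Started: b1.dblock (49.9 MB)",
    "Backend event: Get - Completed: b1.dblock (49.9 MB)",
    "Backend event: Get - Started: b2.dblock (49.9 MB)",
    "Backend event: Put - Started: new1.dblock (49.9 MB)",
    "Backend event: Delete - Started: b1.dblock",
    "Backend event: Delete - Started: b2.dblock",
]
print(rhythm(sample))
```

Grouping into runs instead of counting totals keeps the order, so a healthy compact shows alternating Get, Put and Delete runs, while a log whose last run never changes points to where things stalled.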

What’s your update history? Did you just install a four-month-old Canary and start seeing a problem? The Canary channel is named that because it’s a way to test for breakage, and sometimes it does find some…

Release: 2.0.4.38 (canary) 2019-12-29 is the latest Canary, and Canaries have become very stable lately. Problems that actually exist should be looked at, but chasing issues that are already fixed is wasted effort.

What does “before” mean? If you don’t remember your version history, you might find clues in the updates installed on the drive. Downgrading / reverting to a lower version shows where yours might live. The Duplicati backup job log also records the version, but logs age out. If you keep reports, look there too.

Sometimes it’s difficult to tell the cause of changed results. It might be the version, the usage, or something external…

EDIT:

I don’t read topic titles literally since seeing your “Duplicati broke completely, the terminator came to life”. However, if what happened was that it sat at that exact screenshot without moving, even after you refreshed the browser page and went back to the Profiling log, then you could try adding --http-operation-timeout with a value higher than any normal upload or download has reason to take. But this is not normal and may be a networking issue, or (less likely) something wrong at the remote (which is Google Drive?).
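To pick a value “higher than a normal upload or download has any reason to take”, a rough worst-case calculation helps. In this Python sketch, `suggested_timeout` and its safety factor are hypothetical; the volume size (Duplicati’s default dblock size is 50 MB) and the slowest plausible throughput are inputs you supply. The `21m` output assumes the option accepts a Duplicati-style timespan, so check the option’s help text before relying on that format.

```python
import math

def suggested_timeout(volume_bytes, slowest_bytes_per_sec, safety_factor=3):
    """Worst-case time for one transfer times a margin, rounded up to minutes."""
    seconds = volume_bytes / slowest_bytes_per_sec * safety_factor
    return f"{math.ceil(seconds / 60)}m"

# 50 MiB volume over a link that might drop to 1 Mbit/s (125,000 bytes/s).
print(suggested_timeout(50 * 1024 * 1024, 125_000))  # 21m
```

A generous margin is deliberate: the goal is only to break out of a transfer that is truly stuck, not to cut off one that is merely slow.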

EDIT 2:

If you’re going to stay on Canary, please test the new one, especially if the problem repeats. Is it common?