Okay, I’ve tried it again with no luck: same error in PowerShell, and this time I entered my credentials the right way.

OK. Can you just type `Duplicati.CommandLine.exe` in the command line and tell me if it spits out something?

I could not reply anymore as a new user… I still think I’m doing something wrong here, as nothing works.

It exits after that last line, right?

Yes, sorry forgot to mention that.

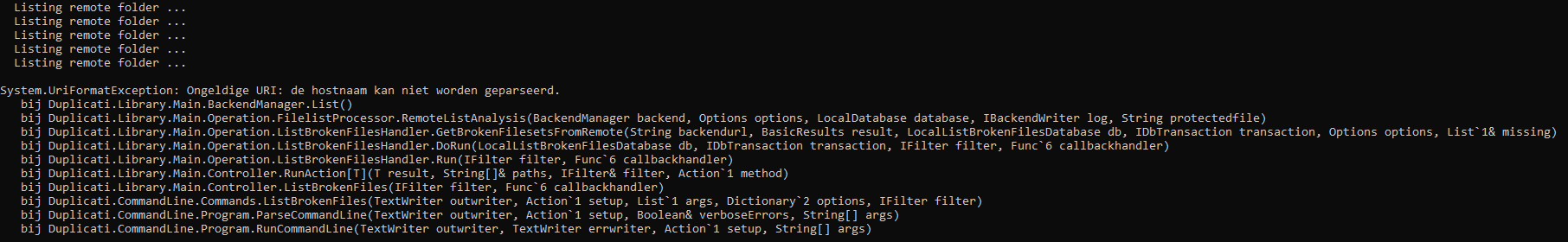

It seems the URI can’t be parsed for some reason.

“System.UriFormatException: Invalid URI: The hostname could not be parsed.”

I hope someone is reading this other than me.

Can you post the exact command you use in PowerShell? Note: exclude the real username, password, and IP.

What is the current problem you are seeing in the Duplicati Web UI? Does it still try to run your backup to STACK and get stuck?

This is the exact command I use:

"C:\Program Files\Duplicati 2\Duplicati.CommandLine.exe" list-broken-files "webdavs://<address>?auth-username=username&auth-password=password" --dbpath="C:\Users\Vincent\AppData\Local\Duplicati\DBNAME.sqlite" --passphrase=passphrase

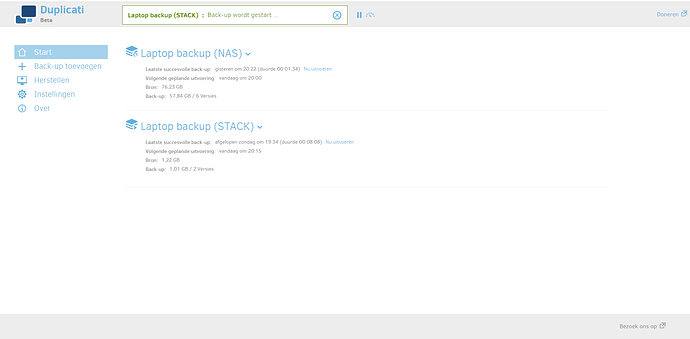
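The thread never pinned down why the URI failed to parse, so this is only a guess: if the real address, username, or password contains characters such as `@`, `&`, `#`, or `?`, they may need percent-encoding before they go into the storage URL. Below is a minimal Python sketch of that idea. It assumes Duplicati decodes percent-encoded query values; `build_backend_url`, `hostname_of`, and the `example.invalid` host are hypothetical names for illustration, not part of Duplicati.

```python
from urllib.parse import quote, urlsplit


def build_backend_url(host_and_path, username, password, scheme="webdavs"):
    """Build a storage URL with percent-encoded credentials in the query string.

    Hypothetical helper: the option names auth-username and auth-password
    come from the command quoted in this thread.
    """
    query = "auth-username={}&auth-password={}".format(
        quote(username, safe=""), quote(password, safe="")
    )
    return "{}://{}?{}".format(scheme, host_and_path, query)


def hostname_of(url):
    """Return the hostname the URL parses to, or None if none is found."""
    return urlsplit(url).hostname


if __name__ == "__main__":
    # Placeholder values only; substitute your own address and credentials.
    url = build_backend_url("example.invalid/backup", "user@example.com", "p&ss#w?rd")
    print(url)
    print(hostname_of(url))
```

If `hostname_of` returns something other than your server name, the URL is already malformed before Duplicati sees it.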

Yes, when I open the Duplicati Web UI it stays on “Starting backup to STACK”, and I can’t stop the process: nothing happens. The same goes for deleting the backup.

My other job, the backup to my NAS, has no issues at all.

When it appears to be stuck, go to About → Show Log → Live and set the dropdown to Verbose. See if you see any activity.

Starting Verbose log in another tab before backup might catch more if it gets stuck soon (which sounds like the case). If it gets stuck very fast, you could try Profiling level which ordinarily is too overwhelming.

Are you using VSS from `--snapshot-policy`? That’s one of the things that can happen in the “Starting” phase.

Please also try the “Test connection” button on screen 2 Destination to be sure that connection works, however there might be different issues. In my test, a connection failure didn’t hang at “Starting backup”. “Verifying backend data” was where it paused. By then it had already done some SQL, and listed files…

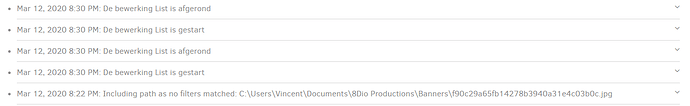

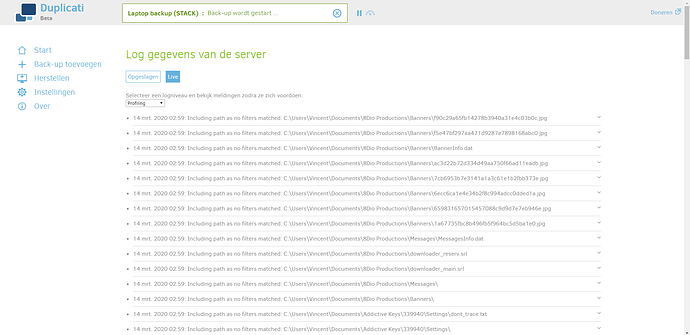

I see a lot of activity, but I’m not sure if it’s from the STACK backup or from my NAS backup. The backup to STACK is starting, though, and this is what I see so far (sorry, it’s in Dutch/English):

It’s now stuck on “Backup is starting …”

I have no idea what you mean by snapshot policy… But what I do know is that the connection is working fine.

I can’t delete the job either to start clean with STACK. When I try to delete it, it isn’t removed.

Click the link for an explanation if you like, however it sounds like you aren’t using that option.

Snapshot is used to backup locked files by getting a “frozen” image of file data at that instant.

This can cause a noticeable delay, although I believe it’s usually just minutes unless it breaks.

“De bewerking List is afgerond” is “The operation List has completed”, but I see no files count.

What other Duplicati things that use this connection are giving results showing “working fine”?

The other odd thing about your list operation is that it did two. Normal operation would do one.

Possibly it’s decided it needs some repair work, but the logging level might not be showing it…

Manually running a Database Repair (after a Duplicati kill and restart) might be a relevant test.

Manually running a Database Recreate would try to recreate database from backup if possible.

Deleting the backup and starting again would be another way to avoid any old lingering issues.

Do you refer to Duplicati’s Process in Task Manager, or Tray Icon right-click then quit, or what?

If you mean that the Stop button can’t stop the “Backup is starting”, that might be expected (but undesired) behavior when things are stuck. Although it’s a bit risky to the database health, Task Manager Details “End Task” should be able to kill all the Duplicati processes (probably just two).

If you’re trying to delete the backup job, you’re probably seeing the request get queued until the Duplicati server finishes its current backup - which isn’t finishing. Many things run one-at-a-time.

If you want to start clean, you can kill Duplicati, restart it, go to the job’s Configuration → Delete, checkmark “Delete remote files”, and answer the CAPTCHA. You can then Export your working configuration for the NAS, Import it for STACK, then change the configuration as necessary for that.

https://forum.duplicati.com/search?q=stackstorage finds sample configurations others have used.

Before doing all that, it might still be worth looking at the live log Profiling view as was mentioned.

That’s almost as detailed a view as is possible. I don’t have STACK, so can’t test things for you…

Would you advise me to use this option? I didn’t have any problems with Duplicati when I backed up my data to my NAS. That was a half-hour job and it finished with no issues.

What I found out (see the screenshot I added) is that everything seems to be there on my STACK. But Duplicati keeps running and nothing happens. So I might need to do a database repair? When I try to delete the job, after entering the CAPTCHA it just brings me back to the home page and nothing happens.

This is the start page:

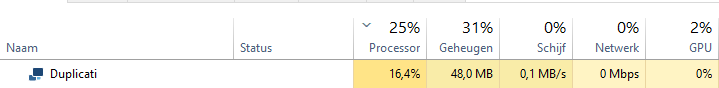

Task Manager view of Duplicati, but it’s doing nothing…

And what’s in STACK:

What can I do? Just try to repair the database of this job, or what is best to do? Sorry again for my English…

Probably best not to add this now, but if you ever have messages about locked files, it can be used.

It does get a little awkward to set up because it needs Administrator permissions or a service install.

Can you use some more words to explain what that means? I took a couple of guesses at it before.

Was the job delete after Duplicati restart, or on the hung one? If on hung, this was explained before.

You probably have to get Duplicati into an idle state (not a stuck state) before you can delete the job.

Killing all the Duplicati processes in Task Manager may be needed, then restart and verify it sits idle.

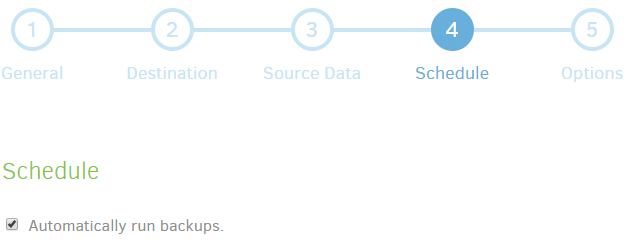

Sometimes you need to remove backup schedules (if you set those) to make sure nothing starts up.

You can certainly try it after a Duplicati restart. It’s partly a test to see what happens. Post any issues.

Recreate is probably more likely to fix DB, assuming the files on STACK are fine (still in some doubt).

Most complicated option is clean delete and restart of that backup, along with recreate of backup job.

This was also described before, along with all of these options. Please ask if you have any questions.

I would also still like to see About → Show log → Live → Profiling output if you can restart Duplicati and restart the backup while watching it that way. Seeing what it does may give clues on its situation. Until some data is available, it’s a progressive experiment. Can’t go directly to what’s best to do here.

EDIT:

For some reason the live profiling log is not showing me everything, so if you choose to collect a log instead of just trying tests first in the hope that something works, you can set Advanced options on screen 5 Options, for example `--log-file` to a spot you can write, and `--log-file-log-level=Profiling`. You are probably getting stuck soon enough that not many path names will show, but you can edit them out if you care. Your source paths are not really relevant, but some of the internal activities may show the stuck spot.

The whole problem is that when I kill Duplicati and start it again, it immediately starts the backup, and I can’t stop or delete it. When I try to remove the backup job, I get the CAPTCHA and everything, but it redirects me to the home page and nothing happens. Even when I use the pause mode at startup, it won’t let me delete the backup job.

I hope this helps? I’m not sure how I can export it otherwise.

Nothing works except the NAS backup. I don’t know what else i can do or explain.

EDIT:

I’m going to delete all files from STACK and see if that works. I’ll post an update.

Did you turn off the schedule? Be sure you uncheck “Automatically run backups” shown below.

I think this can be done at any point even if something is running, but it will then need a restart.

Duplicati at startup will run a job that’s missed its scheduled run, but turning off its schedule stops it, meaning after that job is stopped (or Duplicati killed if necessary), the next start shouldn’t run again.

The Profiling log just stopped and sat still for a while as shown? If so, I had hoped for a better clue…

Some relevant info might also be earlier (scrolled off). Logs can go to files too, but let’s hold off a bit.

Attached is an example log file of an FTP backup of a short file. If you want to test a similar backup, you can set screen 5 Advanced options `--log-file` (supply a path to write) and `--log-file-log-level=Profiling`.

2.0.5.103_test_1 Profiling.zip (5.8 KB)

There’s a lot in the log, however much can be glossed over, for example, SELECT and INSERT to DB working would just offer assurance that database operations are finishing, i.e. that’s not hung on one.

Other highlights can be searched for in an editor such as notepad. Be careful not to give it a huge file.

2020-03-14 09:28:35 -04 - [Information-Duplicati.Library.Main.Controller-StartingOperation]: The operation Backup has started

2020-03-14 09:28:35 -04 - [Profiling-Timer.Begin-Duplicati.Library.Main.Controller-RunBackup]: Starting - Running Backup

2020-03-14 09:28:39 -04 - [Verbose-Duplicati.Library.Main.Operation.Backup.FileEnumerationProcess-IncludingSourcePath]: Including source path: C:\backup source\folder\

2020-03-14 09:28:39 -04 - [Profiling-Timer.Begin-Duplicati.Library.Main.Operation.BackupHandler-PreBackupVerify]: Starting - PreBackupVerify

2020-03-14 09:28:39 -04 - [Information-Duplicati.Library.Main.BasicResults-BackendEvent]: Backend event: List - Started: ()

2020-03-14 09:28:39 -04 - [Information-Duplicati.Library.Main.BasicResults-BackendEvent]: Backend event: List - Completed: ()

2020-03-14 09:28:39 -04 - [Profiling-Timer.Finished-Duplicati.Library.Main.Operation.BackupHandler-PreBackupVerify]: PreBackupVerify took 0:00:00:00.022

2020-03-14 09:28:39 -04 - [Profiling-Timer.Begin-Duplicati.Library.Main.Operation.BackupHandler-BackupMainOperation]: Starting - BackupMainOperation

2020-03-14 09:28:39 -04 - [Verbose-Duplicati.Library.Main.Operation.Backup.FileEnumerationProcess-IncludingSourcePath]: Including source path: C:\backup source\folder\

2020-03-14 09:28:39 -04 - [Verbose-Duplicati.Library.Main.Operation.Backup.FileEnumerationProcess-IncludingPath]: Including path as no filters matched: C:\backup source\folder\short.txt

2020-03-14 09:28:39 -04 - [Verbose-Duplicati.Library.Main.Operation.Backup.MetadataPreProcess.FileEntry-AddDirectory]: Adding directory C:\backup source\folder\

2020-03-14 09:28:39 -04 - [Verbose-Duplicati.Library.Main.Operation.Backup.FilePreFilterProcess.FileEntry-CheckFileForChanges]: Checking file for changes C:\backup source\folder\short.txt, new: True, timestamp changed: True, size changed: True, metadatachanged: True, 3/14/2020 1:25:13 PM vs 1/1/0001 12:00:00 AM

2020-03-14 09:28:39 -04 - [Verbose-Duplicati.Library.Main.Operation.Backup.FileBlockProcessor.FileEntry-NewFile]: New file C:\backup source\folder\short.txt

2020-03-14 09:28:39 -04 - [Profiling-Timer.Finished-Duplicati.Library.Main.Operation.BackupHandler-BackupMainOperation]: BackupMainOperation took 0:00:00:00.052

2020-03-14 09:28:39 -04 - [Profiling-Timer.Begin-Duplicati.Library.Main.Operation.BackupHandler-VerifyConsistency]: Starting - VerifyConsistency

2020-03-14 09:28:39 -04 - [Information-Duplicati.Library.Main.BasicResults-BackendEvent]: Backend event: Put - Started: duplicati-b57fb120ca9ed4bdfb91ff654f7d80b83.dblock.zip (986 bytes)

2020-03-14 09:28:39 -04 - [Profiling-Timer.Finished-Duplicati.Library.Main.Operation.BackupHandler-VerifyConsistency]: VerifyConsistency took 0:00:00:00.001

2020-03-14 09:28:39 -04 - [Information-Duplicati.Library.Main.BasicResults-BackendEvent]: Backend event: Put - Completed: duplicati-b57fb120ca9ed4bdfb91ff654f7d80b83.dblock.zip (986 bytes)

2020-03-14 09:28:39 -04 - [Information-Duplicati.Library.Main.BasicResults-BackendEvent]: Backend event: Put - Started: duplicati-20200314T132838Z.dlist.zip (715 bytes)

2020-03-14 09:28:39 -04 - [Information-Duplicati.Library.Main.BasicResults-BackendEvent]: Backend event: Put - Started: duplicati-i8fcc88b0556640a3889f56c738831a1a.dindex.zip (651 bytes)

2020-03-14 09:28:40 -04 - [Information-Duplicati.Library.Main.BasicResults-BackendEvent]: Backend event: Put - Completed: duplicati-20200314T132838Z.dlist.zip (715 bytes)

2020-03-14 09:28:40 -04 - [Information-Duplicati.Library.Main.BasicResults-BackendEvent]: Backend event: Put - Completed: duplicati-i8fcc88b0556640a3889f56c738831a1a.dindex.zip (651 bytes)

2020-03-14 09:28:40 -04 - [Profiling-Timer.Begin-Duplicati.Library.Main.Operation.BackupHandler-AfterBackupVerify]: Starting - AfterBackupVerify

2020-03-14 09:28:40 -04 - [Information-Duplicati.Library.Main.BasicResults-BackendEvent]: Backend event: List - Started: ()

2020-03-14 09:28:40 -04 - [Information-Duplicati.Library.Main.BasicResults-BackendEvent]: Backend event: List - Completed: (3 bytes)

2020-03-14 09:28:40 -04 - [Profiling-Timer.Finished-Duplicati.Library.Main.Operation.BackupHandler-AfterBackupVerify]: AfterBackupVerify took 0:00:00:00.436

...

Some of the clues are at relatively light log levels, such as Information. Verbose gets more source file information (and more of a privacy leak if that’s a concern), but some stages only show up at Profiling.

So another option would be to start with a tiny backup with no special options, see if it works, compare logs against the above, etc. There’s more on the log at the end, but I didn’t highlight that because your backup might not have gotten to the end. We’re still trying to find where it was when it stopped moving.
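One way to find where a run stopped moving is to look for stages that logged a `Timer.Begin` line but never a matching `Timer.Finished`. The sketch below is a rough Python helper for that, assuming the log lines look like the excerpt above; `unfinished_timers` and the log path in the usage comment are hypothetical names, not anything Duplicati provides.

```python
import re

# Matches tags such as
# [Profiling-Timer.Begin-Duplicati.Library.Main.Controller-RunBackup]
TIMER_TAG = re.compile(r"\[Profiling-Timer\.(Begin|Finished)-([^\]]+)\]")


def unfinished_timers(lines):
    """Return timer names that logged Begin without a later Finished, in start order."""
    open_timers = []
    for line in lines:
        match = TIMER_TAG.search(line)
        if not match:
            continue
        kind, name = match.groups()
        if kind == "Begin":
            open_timers.append(name)
        else:
            # Close the most recent open timer with the same name, if any.
            for i in range(len(open_timers) - 1, -1, -1):
                if open_timers[i] == name:
                    del open_timers[i]
                    break
    return open_timers


# Usage (the path is a placeholder for wherever --log-file points):
# with open(r"C:\tmp\duplicati.log", encoding="utf-8", errors="replace") as log:
#     for name in unfinished_timers(log):
#         print("still open:", name)
```

The file is read line by line, so a large Profiling log should not need to fit in an editor. The last name in the result is usually the innermost stage that was still running when the log ended.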

Thank you, I finally managed to remove the entire backup job by removing the scheduled run. What would you advise? Shall I run the entire 75 GB backup at once, or in several versions? I assume the last version would be a full version after all, right?

I’m sorry for the trouble, and I thank you for the effort you put into helping me. Also a thank you to everyone else who has helped me!

We never figured out the original issue, so debugging a tiny backup (if it fails) is far simpler.

If it breaks, compare logs against the one I gave to see where it broke. If it works, try larger.
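Comparing two logs by eye gets tedious, so here is a rough Python sketch that reduces each log to its stage timers and reports the first place they differ. It assumes both logs were written at Profiling level in the format of the excerpt above. The `keep` filter (only `Controller-` and `BackupHandler-` timers) is my own assumption to skip per-query database timers, and `stage_events`, `first_divergence`, and the file names are hypothetical.

```python
import re
from itertools import zip_longest

TIMER_TAG = re.compile(r"\[Profiling-Timer\.(Begin|Finished)-([^\]]+)\]")


def stage_events(lines, keep=("Controller-", "BackupHandler-")):
    """Reduce log lines to 'Begin <name>' / 'Finished <name>' for selected timers."""
    events = []
    for line in lines:
        match = TIMER_TAG.search(line)
        if match and any(part in match.group(2) for part in keep):
            events.append("{} {}".format(match.group(1), match.group(2)))
    return events


def first_divergence(good_lines, bad_lines):
    """Return (index, good_event, bad_event) at the first difference, or None."""
    good = stage_events(good_lines)
    bad = stage_events(bad_lines)
    for index, (g, b) in enumerate(zip_longest(good, bad)):
        if g != b:
            return index, g, b
    return None


# Usage (file names are placeholders):
# with open("working.log", encoding="utf-8", errors="replace") as good, \
#      open("stuck.log", encoding="utf-8", errors="replace") as bad:
#     print(first_divergence(good, bad))
```

A `None` on the stuck side means that log simply ended before the working one did, which points at the stage that was running at the time. Treat any result as a hint for where to read more closely, not as a diagnosis.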