Hi,

Backup details:

System: Synology DS418 (Realtek RTD1296)

Storage: Wasabi (S3)

Duplicati version: The problem began with 2.0.5.1, but I’ve also tried 2.0.6.3 and v2.0.6.103-2.0.6.103_canary_2022-06-12.

Another DS418 on the same network has the same problem. Two DS420 units on the same network, using the same storage, have no problems.

The backup starts uploading files and then freezes within 30 minutes. I’ve tried waiting 5 days with no success.

2022-06-15 13:33:04 +02 - [Information-Duplicati.Library.Main.BasicResults-BackendEvent]: Backend event: Put - Started: duplicati-b3d059f1edad5475c9ca72f994106593b.dblock.zip.aes (49.99 MB)

2022-06-15 13:33:04 +02 - [Profiling-Timer.Begin-Duplicati.Library.Main.Database.ExtensionMethods-ExecuteScalarInt64]: Starting - ExecuteScalarInt64: INSERT INTO “Remotevolume” (“OperationID”, “Name”, “Type”, “State”, “Size”, “VerificationCount”, “DeleteGraceTime”) VALUES (1, “duplicati-ba52bad7c4c8a4321880748bdd26ecacf.dblock.zip.aes”, “Blocks”, “Temporary”, -1, 0, 0); SELECT last_insert_rowid();

2022-06-15 13:33:04 +02 - [Profiling-Timer.Finished-Duplicati.Library.Main.Database.ExtensionMethods-ExecuteScalarInt64]: ExecuteScalarInt64: INSERT INTO “Remotevolume” (“OperationID”, “Name”, “Type”, “State”, “Size”, “VerificationCount”, “DeleteGraceTime”) VALUES (1, “duplicati-ba52bad7c4c8a4321880748bdd26ecacf.dblock.zip.aes”, “Blocks”, “Temporary”, -1, 0, 0); SELECT last_insert_rowid(); took 0:00:00:00.000

2022-06-15 13:33:08 +02 - [Profiling-Timer.Begin-Duplicati.Library.Main.Operation.Common.DatabaseCommon-CommitTransactionAsync]: Starting - CommitAddBlockToOutputFlush

2022-06-15 13:33:09 +02 - [Profiling-Timer.Finished-Duplicati.Library.Main.Operation.Common.DatabaseCommon-CommitTransactionAsync]: CommitAddBlockToOutputFlush took 0:00:00:00.371

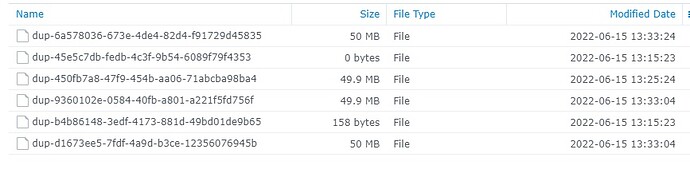

Temp dir (cleaned before the backup):

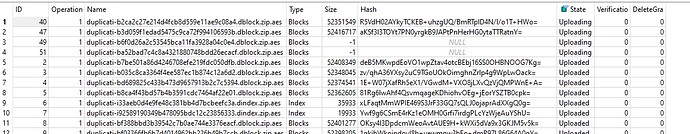

RemoteVolume table (the local database and the remote storage folder were deleted before the backup):

What I’ve tried so far:

- Updated from the initial 2.0.5.1 to 2.0.6.3 and then to the latest canary

- Set http-operation-timeout to 1 minute

- Changed blocksize to 10 MB

It seems like the connections hang.
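One way to check this from the log would be to list the uploads that started but never finished. This is only a rough sketch in Python: it assumes a finished upload is logged as `Put - Completed` or `Put - Failed` in the same format as the `Put - Started` line above, and the function name `pending_uploads` and the `sample` list are made up for illustration.

```python
import re

# Matches backend events in the format seen in the log above, e.g.
#   "... Backend event: Put - Started: duplicati-b....dblock.zip.aes (49.99 MB)"
# "Completed" and "Failed" are assumed to mark the end of an upload.
EVENT_RE = re.compile(r"Backend event: Put - (Started|Completed|Failed): (\S+)")


def pending_uploads(log_lines):
    """Return names of volumes whose upload started but never completed or failed."""
    pending = []
    for line in log_lines:
        match = EVENT_RE.search(line)
        if not match:
            continue  # not a Put event (profiling lines, other operations, ...)
        state, name = match.groups()
        if state == "Started":
            if name not in pending:
                pending.append(name)
        elif name in pending:
            # Completed or Failed: the upload is no longer in flight.
            pending.remove(name)
    return pending


# Two lines taken from the log excerpt above.
sample = [
    "2022-06-15 13:33:04 +02 - [Information-Duplicati.Library.Main.BasicResults-BackendEvent]: "
    "Backend event: Put - Started: duplicati-b3d059f1edad5475c9ca72f994106593b.dblock.zip.aes (49.99 MB)",
    "2022-06-15 13:33:08 +02 - [Profiling-Timer.Begin-Duplicati.Library.Main.Operation.Common."
    "DatabaseCommon-CommitTransactionAsync]: Starting - CommitAddBlockToOutputFlush",
]
print(pending_uploads(sample))
```

Running it over the full log file (for example `pending_uploads(open(path, encoding="utf-8"))`, with `path` pointing at the log) should show which volumes are still stuck in flight when the backup freezes.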

Any ideas?