Hi ts,

Thanks again! To avoid confusion, I'll start from the beginning (and since I finally found the logs, there are a few more details):

-

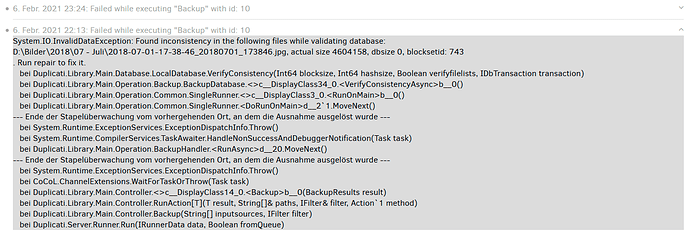

It is a first-time backup (so only 1 version) of 47 GB of picture data. The backup process stopped when nearly complete (possibly while performing a test after the full backup?):

-

A restart did not work; I got the same error.

-

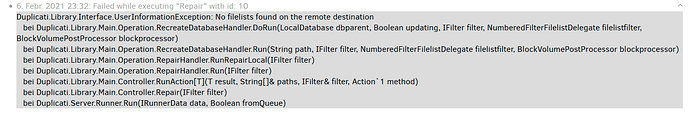

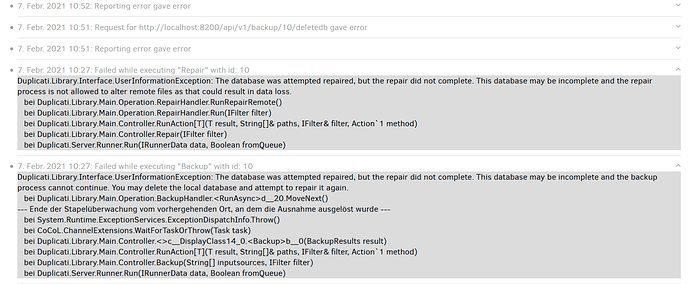

“Repair” did not work:

-

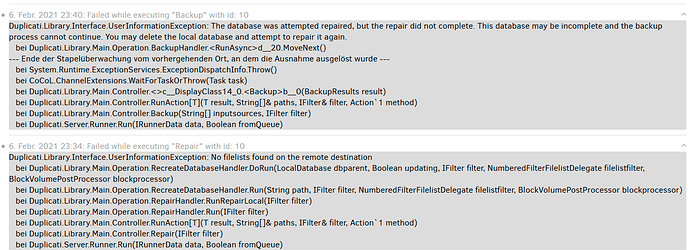

“Backup” did not work either:

-

I think it was at this stage (though I'm not sure) that I deleted the DB and got the “no filelist” error:

-

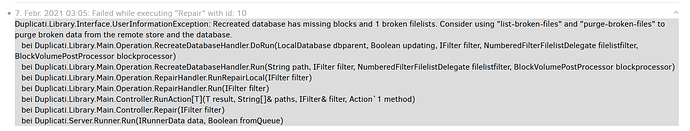

After I uploaded a dummy filelist, the repair process could be started. It ended three hours later with this error:

Afterwards, I got these messages when trying to repair:

Afterwards, it restarted uploading files to the destination.

Unfortunately, in the meantime, I have deleted the new files on the destination (from 7 February onwards) so that only the “old” pure backup